PowerPoint endures, mobile apps look secure

Editor's note: Tim Macer is managing director, and Sheila Wilson is an associate at meaning ltd., the U.K.-based research software consultancy that carried out the study on which this article is based.

Now in its ninth year, the 2012 iteration of the Confirmit Market Research Software Survey of software and technology among market research companies finds an industry not only dependent on technology but adjusting its activities around the new opportunities technology presents.

In addition to tracking major trends, the study delves into some of the hottest topics each year. This year, those include incentivized panels, interview quality measures, survey length and the impact of mobile.

For the first time, readers of Quirk’s were also invited to join the survey, which has helped boost response this year to a record 250 participating companies in 37 countries. As before, we only include responses from IT decision-makers or decision-influencers within market research companies in the sample and include only one response from each company, as our target is companies, not individuals.

Results are presented unweighted and we advise that you consider all figures reported to be indicative in nature and thus not to be used as a basis for projections. In this article, we have picked out a few highlights from the full report, which you can download at meaning.uk.com/mrss12.

Research modes in use

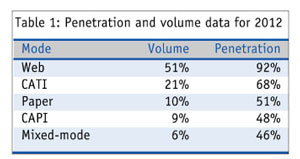

Since 2006 the survey has tracked the relative volumes of research work done by mode. While the dominance of the major modes remains largely unchanged, evidence is stacking up that some of the newer research approaches are now coming more to the forefront. Overall, survey estimates for the four principal data collection modes, plus mixed-mode, are little changed from previous years – although paper is continuing on its gradual downward slide, from 21 percent of fieldwork in 2006 to just 10 percent today. Together, these five modes account for 97 percent of the fieldwork volumes reported in the survey.

By asking the volume of work done, we can also estimate the penetration of each mode – how many firms are tooled up to offer that method.

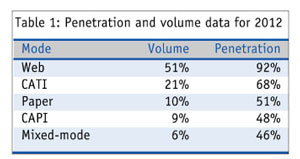

Although the numbers are small – and therefore prone to variation – the trend for minority data collection modes shows some interesting changes. Volumes remain low. Mobile self-completion is a bare 2 percent, though this is up from previous years – until now it has hovered at or around 1 percent. But more companies are now offering these specialist data collection modes, as Figure 1 shows.

Online sample sources

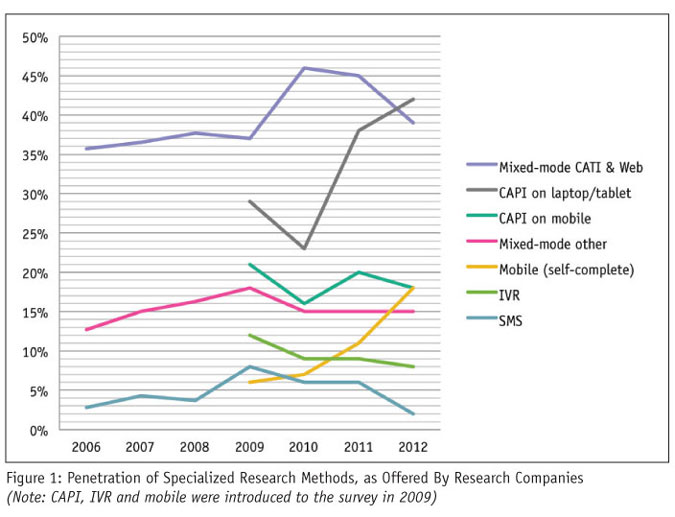

We were intrigued this year to notice that the “others” response to the question “Which sources of online sample do you use?” seems to have taken an upward turn (Figure 2). In most years, around 10 percent of companies said they used “other” sample sources, but in 2011 it was 17 percent and in 2012, 24 percent.

This prompted us to do some specific follow-up with these companies, from which we learned that researchers are being ever more resourceful in finding ways to reach survey populations. One researcher working at a large company told us that he uses old-fashioned snail mail with a survey link. Our contacts also mentioned diverse places where recruitment adverts can be placed, such as social media, magazines and Web sites related to the subject of the survey, etc.

Having said this, our “other” sample sources represent only 4 percent of the total volume of sample used. Access panels remain predominant and they are slowly taking a larger slice of the cake – in 2006, 29 percent of sample was sourced from access panels and in 2012, it is 35 percent. This increase seems to be replacing missing volume from companies’ own panels, where the share has gradually fallen from 30 percent to 24 percent in the same time period.

Incentivized panel use

In the light of recent controversies over the reliability of surveys from incentivized panels and the investigations by industry bodies such as CASRO and ESOMAR, we focused several questions for 2012 on panels and the panel quality measures that operators use.

In one question, we established that panels with incentives account for the majority of online research – our estimate is 63 percent overall and 66 percent specifically in North America. A quarter of companies claim to use incentivized panels for all of their online research.

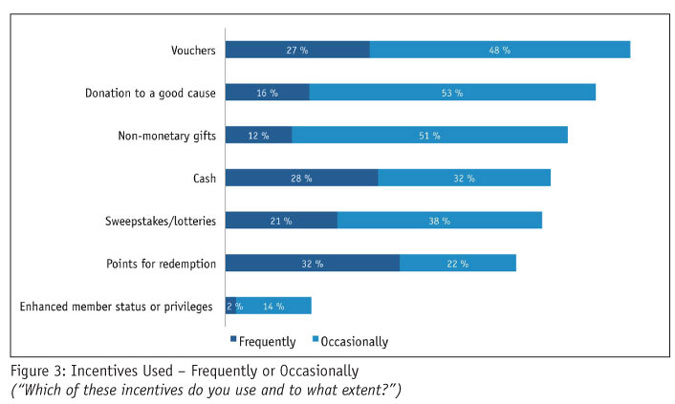

Near-cash incentives and vouchers are the most prevalent overall, with 75 percent of companies using them (Figure 3). The very similar method of accumulating points until a specific reward is given seems to divide opinion. Overall, it is the method most respondents said they used frequently and it is more likely to be used as a primary method than an occasional method. That is, if people put a points system in place, they tend to exploit it to the fullest.

What might be viewed as the less “contaminating” methods – donations to a good cause and awarding kudos to the member through enhanced status or privilege – are less favored, much less so in the case of reward via status or privilege. This method has most relevance in the context of MROCs, where there are others to notice your enhanced kudos. It was the only method to be in the minority, with just a handful of proponents, which probably also reflects the relative status of MROCs in the mix – which we will explore presently.

Online survey quality control measures

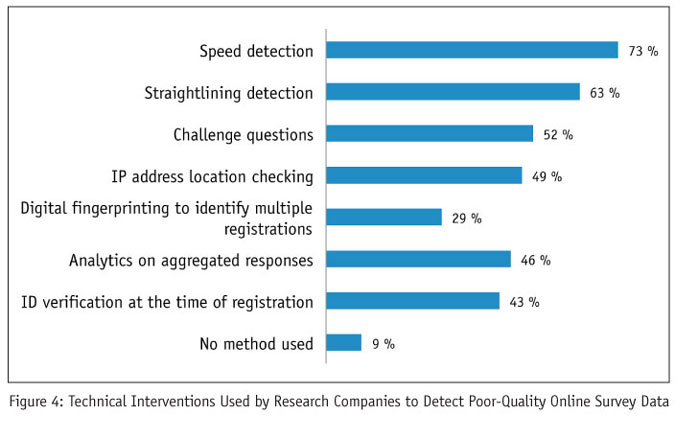

The aspect of incentivized panels which most concerns methodologists and industry bodies alike is their effect on survey quality. If people are lying to take surveys and scoop the reward, then it behooves the survey designer to be wary and set traps to detect them.

Curious to know the extent to which this was happening, we included a question on the quality-control measures that firms routinely apply to their surveys. As seen in Figure 4, the results show this is an area the industry is taking seriously.

Weeding out the hyperactives

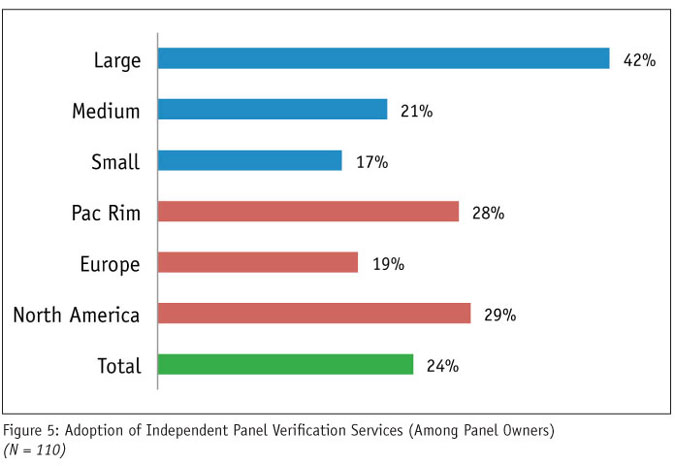

Digital fingerprinting can help to identify the more extreme professional respondents that CASRO and others are particularly concerned about – those who register multiple times and those who may actually be research bots rather than real people – but only within your own panel. Independent panel verification services that work across all the panels of those who subscribe to them provide the only viable cross-industry method to weed out these most damaging of fraudulent respondents.

We asked companies that operate their own panels (a subset of 110 of the 250 companies researched) whether they subscribed to an independent panel verification service (Figure 5). And for most, the answer is no. Overall, just 24 percent of these companies use such services. Uptake appears to relate strongly to company size, with large firms more than twice as likely to use these services as smaller operators.

As these services rely on a subscription, cost may be a deterrent to smaller operators, but there could also be technical complexities to overcome, which are more difficult for companies with fewer technical resources in-house. Perhaps the providers of these services need to evaluate how attractive their offerings are to the smaller operator.

There was also markedly less uptake in Europe than elsewhere, for which we have no ready explanation beyond noting the observation.

Are surveys getting any shorter?

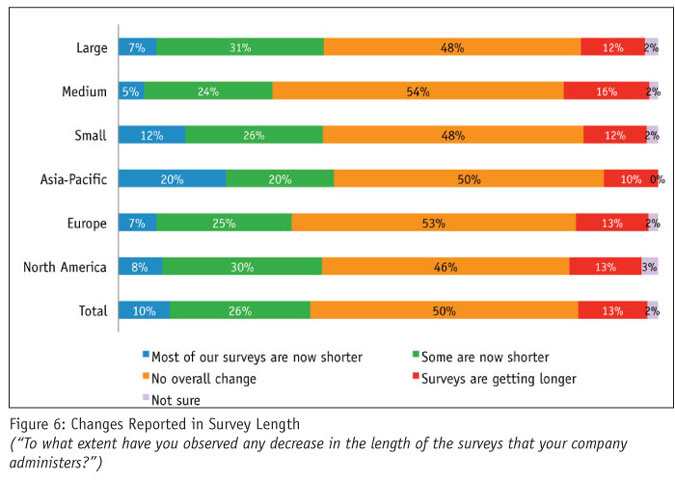

The debate over the viability of mobile surveys, as well as wider concerns over participation and engagement in survey research, made us curious to see if this heightened awareness of survey duration was having any effect on the lengths of surveys being fielded (Figure 6). We asked whether surveys were getting shorter and, if so, what active steps firms were taking to reduce survey length.

It seems there is a weak trend toward shorter surveys, with one-in-three observing a reduction. But this has to be set beside the majority who see no change or even see them getting longer.

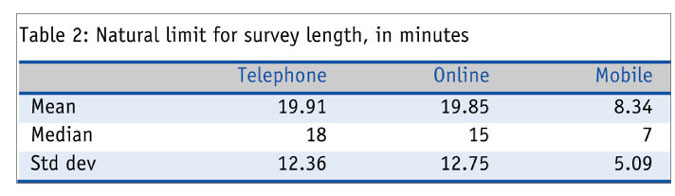

In a separate question, we asked respondents what they considered the natural limit of interview length for telephone, online and mobile surveys to be. The means and medians for each show a fairly broad consensus over how long they should be – which we suspect is a lot shorter than they often are (Table 2). The median probably gives a better indication than the mean, as each question had a long tail of responses. Practitioners clearly recognize that mobile must be drastically shorter than either online or telephone.
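To illustrate why the median is the safer summary when answers have a long tail, here is a minimal Python sketch. The response values are invented purely for illustration and are not figures from the study; the `summarize` helper is likewise hypothetical.

```python
from statistics import mean, median

# Hypothetical answers, in minutes, to "what is the natural limit of a
# mobile survey?" These numbers are invented; they are NOT study data.
responses = [5, 5, 5, 8, 10, 10, 10, 10, 12, 15, 15, 20, 30, 60]


def summarize(values):
    """Return (mean, median) for a list of numeric responses."""
    return mean(values), median(values)


# Summary of all answers, including the long tail (30 and 60 minutes).
avg_all, med_all = summarize(responses)

# Summary with the two largest answers dropped (the list is sorted).
avg_trimmed, med_trimmed = summarize(responses[:-2])

print(f"all answers:  mean = {avg_all:.1f}, median = {med_all}")
print(f"tail removed: mean = {avg_trimmed:.1f}, median = {med_trimmed}")
```

In this made-up example, a couple of very high answers pull the mean well above the typical response, while the median stays where most answers cluster.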

As regards steps being taken to reduce the length, 50 percent cited policies or best practice guidelines, 42 percent said they were using survey logic or adaptive surveys to reduce the length delivered to individual participants, 24 percent will consider breaking up long surveys into smaller surveys (which many mobile practitioners advocate) and 21 percent are favoring semi-structured interviews instead of seemingly endless grids and batteries of scales. Thirteen percent pointed to “other measures,” but we don’t know what these are.

Attempts to limit survey length seem to be an aspiration still, rather than a work in progress.

A bright future for mobile apps

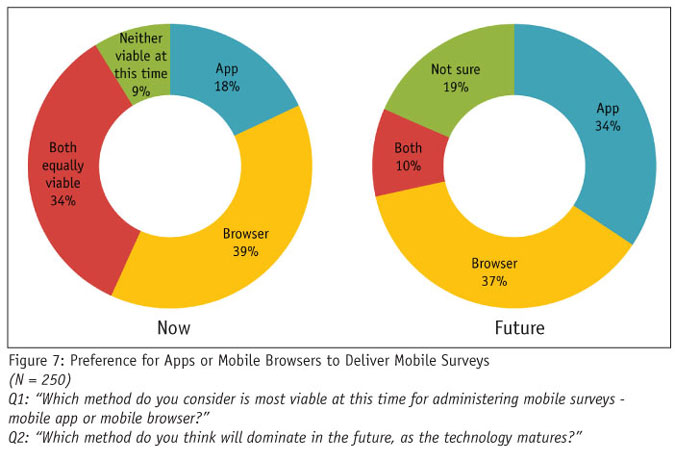

Mobile surveys continue to be one of MR’s hottest topics at industry events. For the technology providers, opinion appears polarized between mobile surveys using the smartphone’s browser as the way forward versus building dedicated survey apps to exploit the full potential of the phone. For the casual survey taker, a browser-delivered mobile survey makes more sense. It requires a level of trust and probably too much effort to download an app onto your smartphone simply to take a one-off “quick survey.” Browser surveys, however, are limited in their ability to integrate the other features of a smartphone, such as capturing pictures or video or using reminders for diary surveys.

We did not explain these nuances to our survey audience but simply asked which approach they think is most viable today and which shows most promise for the future.

Looking at Figure 7, apps are definitely seen as the underdog at present. But those developing apps should take cheer from the survey’s prognosis for the future – where the number thinking apps are the way forward virtually doubles. Yet the number favoring browsers hardly changes – it is the number who think both have merit that diminishes.

What this seems to demonstrate is, ironically, the opposite: that software of the future needs to be able to offer solutions to both camps. The app may be ascendant but the browser is not going away.

A continuing niche role for MROCs

The survey looked at communities in 2009 and established then that only 17 percent of firms operated communities, with a further 27 percent planning to introduce them and 56 percent stating they had “no current plans” for communities. We asked this question again for 2012 and the numbers have not increased. Just 16 percent state they operate at least one community, which is virtually unchanged. Only 18 percent have an intention to launch an MROC service, while 66 percent say they have no plans.

The same picture emerges from the actual number of communities operated by the minority that do: More than half run fewer than five and a very small number of firms operate a lot of communities. Our conclusion is that there are still not many communities out there.

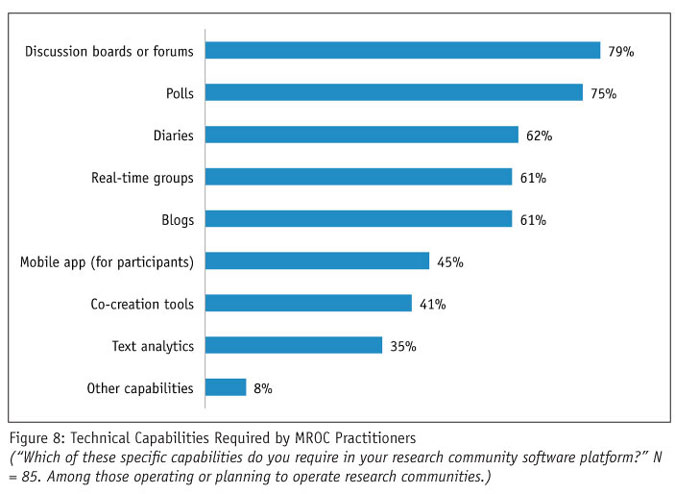

Communities are not as well-served with software solutions as conventional research so we were interested to know what kinds of features MROC researchers and operators needed (Figure 8). While there is clear consensus about the need for forums and polls, a substantial majority also want real-time groups, diaries and blogs in their toolkits. Almost half (45 percent) of those responding make a link between communities and mobile participation and want to see a mobile app for community members to use. Mobile apps are particularly useful in fulfilling another priority need: the diary survey.

In another question, we discovered that only 27 percent of firms use the same software to run their communities and their other online research or panels; 55 percent use dedicated community software and 18 percent supplement online survey tools with specialist community tools.

Long-term trends on reporting methods

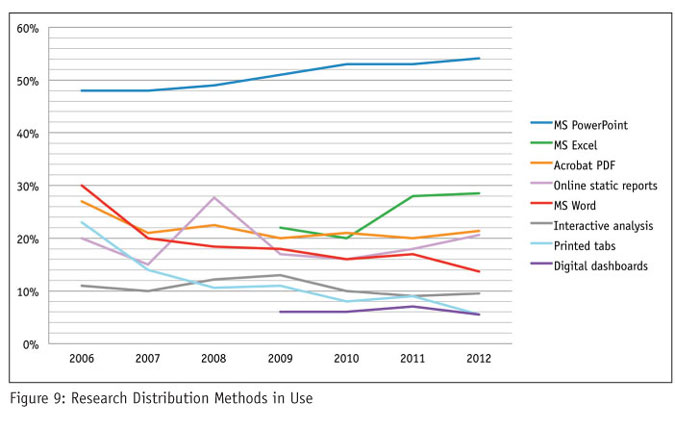

We ask our participants what percentage of their projects involve each of the eight principal methods of distributing research data or findings (Figure 9). For many years now, this study has shown that market researchers have a dependency on PowerPoint as well as other Microsoft Office products, which keeps other more advanced methods in the shade.

Overall, there has been little change at the high end – demand for digital dashboards, interactive analysis and online static reports remains fairly flat.

However, when we dig a little deeper into the data, for the first time in 2012, we see a glimmer of a possibility that some sections of the industry are starting to make more use of other specialized methods.

It is noticeable that in 2012 North American companies report making greater use of online static reports. In this region, 26 percent of projects are delivered in this way, compared with 16 percent and 18 percent for Europe and Asia-Pacific, respectively. It is also more prevalent among large companies (21 percent) versus small companies (a mere 8 percent).

A similar difference is apparent with dashboards. In 2012, large companies claim they are delivering 13 percent of their projects in this way, compared to 7 percent for medium-sized companies and 3 percent for small companies. It is a pattern often observed in this study over the years – that smaller companies find it more difficult to embrace many of the more technologically advanced methods.

The use of Excel seems to have experienced a recent surge. We consider this perhaps a sign that market researchers now think it more important to give clients reports that can be manipulated. However, this also raises issues of ethics, trust and the authority of the research company, as it relies on the user taking considerable care in Excel not to manipulate the findings in ways that make them unreliable or even misleading. Is this another triumph of expedience over efficacy?