Editor's note: Matthijs Visser is a principal at Advanis, an Edmonton, Alberta-based research firm.

Twenty-five years ago, every survey respondent’s primary real-time communication method was a landline phone. These days, respondents’ preferred communication methods vary enormously due to the plethora of devices and applications available to them. To list a few statistics: 91 percent of U.S. adults own a cell phone1; 55 percent of those own a smartphone2; 82 percent of U.S. adults use e-mail3; 81 percent of adult U.S. cell phone owners use text messaging4; 64 percent of U.S. households have a landline phone5.

With such a multitude of communication methods available, respondents have developed communication preferences that are unique to each individual. Even though a survey respondent may have access to e-mail, mobile and landline voice and text messaging, it doesn’t mean that they prefer to use each method equally. What if our research efforts could respect those preferences as opposed to dictating a single data collection methodology?

We hypothesize that giving respondents different options for providing feedback yields the following benefits: an improved survey experience; a higher survey response rate than a single survey methodology achieves; and a sample that is more representative of the population.

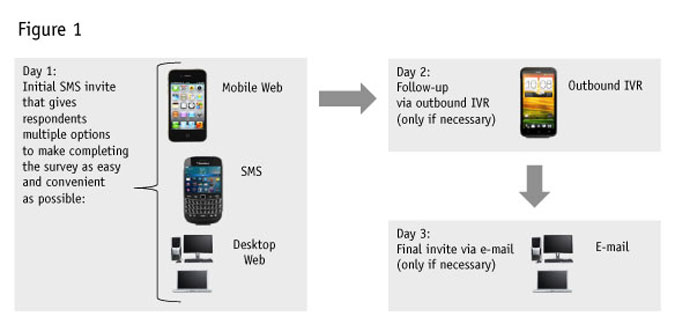

But is that in fact the case? To gauge the effectiveness of providing respondents with multiple options to complete the survey, Advanis, in partnership with our client, conducted a pilot on a large customer-experience study. Three methodologies were tested: IVR-only, SMS-only and letting respondents choose between SMS, Web and IVR, as shown in Figure 1.

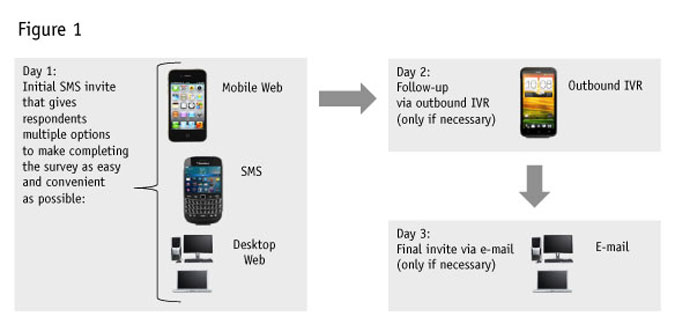

So, do respondents actually make use of each of the different feedback options when offered? When we allowed survey respondents to choose their preferred feedback method, all of the options provided were used (Figure 2). No single methodology dominated, which suggests that respondents appreciate having the different options available to them.

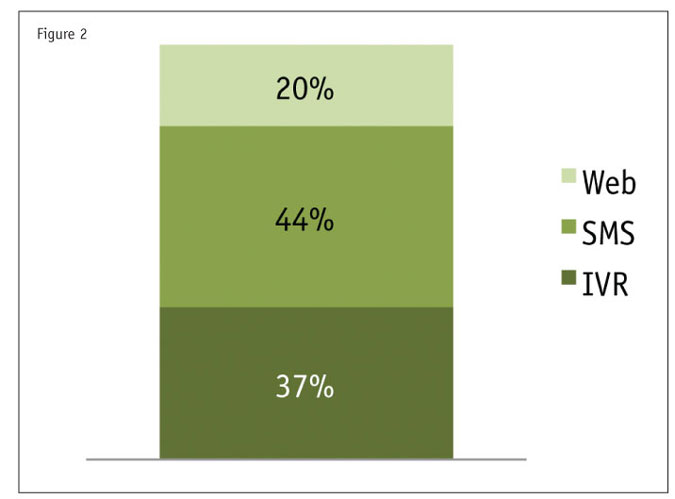

Did we see higher response rates when offering multiple options? Letting respondents choose resulted in substantially higher response rates6 compared to the IVR-only (+32 percent) and SMS-only (+54 percent) methodologies (Figure 3).

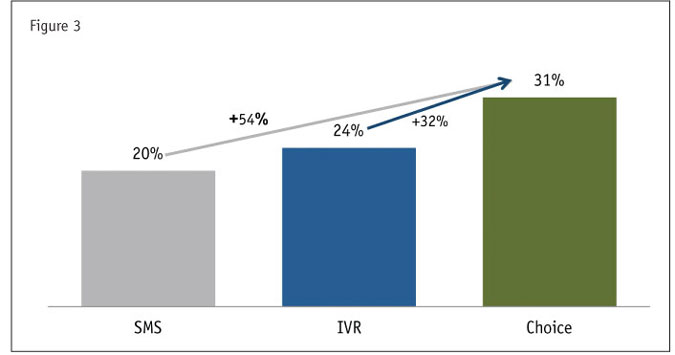
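For readers who want to reproduce this kind of comparison on their own data, the following is a minimal sketch of the arithmetic, assuming the response-rate definition given in the notes (completed surveys divided by all records contacted). The function names and the counts are hypothetical and are not figures from the pilot. Because a stated increase can be read either as a relative lift or as a gap in percentage points, the sketch computes both.

```python
def response_rate(completed: int, contacted: int) -> float:
    """Completed surveys divided by all records contacted."""
    if contacted <= 0:
        raise ValueError("contacted must be positive")
    return completed / contacted


def relative_lift(rate: float, baseline: float) -> float:
    """Relative change of rate versus baseline (0.25 means +25 percent)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (rate - baseline) / baseline


# Hypothetical counts for illustration only; not figures from the pilot.
choice = response_rate(completed=150, contacted=1000)
ivr_only = response_rate(completed=120, contacted=1000)

lift = relative_lift(choice, ivr_only)      # relative difference
point_gap = (choice - ivr_only) * 100       # difference in percentage points
print(f"relative lift: {lift:+.0%}, gap: {point_gap:.1f} percentage points")
```

Reporting both numbers side by side makes it harder to misread a comparison between methodologies.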

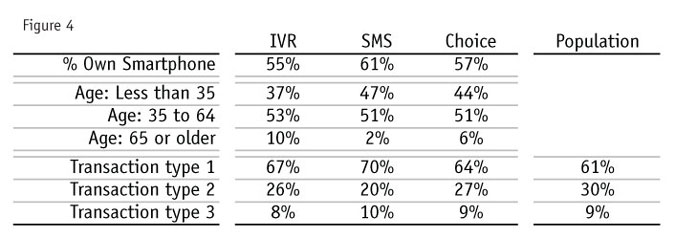

Are respondents representative of the overall population? Although population-level data weren’t available for most respondent characteristics, we were able to compare certain characteristics across the three methodologies tested (Figure 4). For transaction type, a proxy of the population distribution was also available, allowing us to compare that distribution to each of the three methodologies.

When respondents were able to select their preferred methodology, phone type and age characteristics reflected a balance between the IVR-only and SMS-only characteristics. This is perhaps not surprising, since this methodology offers respondents both options, as well as the option to complete the survey online.

The transaction type distribution for respondents who were able to choose their feedback method most closely reflects the population distribution.

Result is positive

Our case study demonstrates that giving survey respondents the ability to choose their feedback method has a positive result. Response rates increase substantially, and the sample remains representative. On top of that (and arguably even more importantly), the survey experience is made much more convenient and enjoyable for respondents.

The application of this approach extends beyond the methodologies used as part of the case study. Any combination of feedback options (CATI, IVR, e-mail-to-Web, SMS, etc.) can be presented to respondents based on the available contact information and the unique requirements of each individual study. For example, when applying this multimodal approach in partnership with another client, we attained a response rate7 of over 50 percent by using a combination of CATI and e-mail-to-Web.

References

1 http://pewinternet.org/~/media//Files/Reports/2013/PIP_Cell%20Phone%20Activities%20May%202013.pdf

2 Ibid.

4 http://www.pewinternet.org/Reports/2013/Cell-Activities/Main-Findings.aspx

5 http://www.ctia.org/advocacy/research/index.cfm/aid/10323

6 Calculated as completed surveys divided by all records contacted.

7 Again, calculated as completed surveys divided by all records contacted.