Of qual and quality

Qualitative research often gets a bad rap. It’s old-fashioned, it’s too basic, it’s not projectable, the detractors say. While there is some truth to each of those criticisms, qual has endured for decades and, if the results of our ninth annual Q Report survey are any indication, it looks set to do so for many more.

Similar to the 2017 Q Report, a focus of this year’s survey was tools and methods – their effectiveness, how they are chosen and what factors influence their adoption – and while tech-driven quant approaches acquitted themselves well, online and mobile qualitative methods notched noticeable increases in the percentages of respondents rating them effective or very effective compared to 2017.

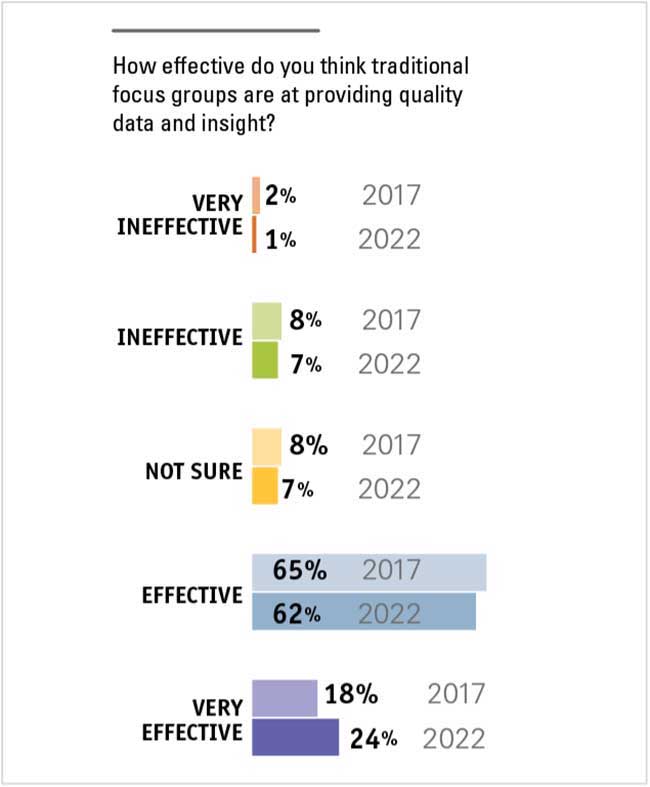

(The data is remarkably consistent across the five-year span; percentages for most other tools and techniques were within a few points of each other. Traditional in-person focus groups held their own, with the share rating them effective or very effective rising slightly, from 83% in 2017 to 86% in 2022.)

In 2017, a combined 67% said online qualitative/focus groups were effective or very effective. For 2022, that number rose to a combined 83%.

In 2017, a combined 44% said mobile qualitative was effective or very effective. For 2022, that number rose to a combined 58%.

We didn’t delve into the reasons for these assessments, but it’s likely that many 2022 respondents viewed digital qual methods as lifesavers during the pandemic. They may have turned to them out of necessity, but they were evidently pleased with their efficacy and utility, especially against the backdrop of the concerns some respondents expressed this year about panel data, which can be tainted by fake respondents and other bad actors. To be sure, people can lie their way into focus groups and fail to give truthful answers during them, but qual’s in-person nature definitely has a leg up on panel research’s black-box anonymity for those worried about who’s answering their research questions.

Along with questions about department staff levels, years on the job, skill sets and job satisfaction (see accompanying content for a deeper dive on those findings), we asked respondents about the biggest MR-related change they foresaw their organizations making in the coming year; the effectiveness of traditional and newer techniques; how they stay up to date on methods and techniques; and the areas of marketing research that frustrate them.

Predictability and stability

Innovation is certainly critical in any industry, and marketing research is no different. But for all the noise MR industry observers, vendors and VC-backed startups make about the need for innovation, our study finds that researchers and their internal clients place much more value on predictability and stability when it comes to picking and using MR methods. And while new doesn’t necessarily equal scary, respondents told us, it does introduce an uncomfortable level of uncertainty when huge business decisions are being made.

If we can try new methods in a cost-controlled environment, that is preferable. We also need to make sure the new methods are going to allow us to meet business needs (i.e., getting the clarity of responses we need, adequate numbers of responses, credible data, respondents in the right demographics), so we vet them that way, too.

I consider what business and research questions we are trying to solve and consider new approaches as one of the options to address these. The importance and visibility of the objectives often dictate whether or not I feel we can take the risk of trying something new.

On top of that, even if a tool or method seems to have great promise, there need to be other forms of proof – a case study, a recommendation from a friend or peer, etc. – in order to make the leap.

I keep an eye out for new and proven research and analysis techniques via refereed professional journals, data science books and by maintaining close connections with academics in marketing, behavioral science and computer science. (Vendors are generally my least-reliable sources.)

I look at the evolution of the methodology. I’m interested in learning what its original purpose was and how much it has been altered over time. In general, I appreciate robust methodologies with a precise purpose rather than multipurpose ones. No matter how innovative the methodology, I do not believe in one size fits all. I find it more effective to work with several tools/methodologies that complement each other.

Caution is the watchword

As in 2017, we asked respondents to characterize their organizations’ tendencies when it comes to adopting new methods, and the 2022 numbers are nearly identical. Caution still appears to be the watchword, with 45% saying they are among the late majority and 16% placing themselves in the slow-to-adopt camp. These findings line up with what readers have told us for years in their open-ended responses: experimenting with new approaches for its own sake doesn’t fly with their internal audiences, many of whom are happy to rely on traditional methods they view as safe and dependable.

Beyond internal resistance, B2B researchers among our readers cited their respondents themselves as an impediment to change:

Our research must be conducted among a very small B2B target market. The average age of the ultimate customer is mid-50s and many in the target market resist new technologies.

Our [main] industry is B2B medical professionals, so adoption of new tech is slower among our primary target.

Those working in heavily regulated industries are also often limited in their ability to easily switch to new approaches.

Cost, of course, is the No. 1 consideration and barrier to trying new tools, followed by a host of other factors: internal comfort with familiar methods; the chicken-or-the-egg problem of new tools’ lack of a track record (if nobody tries them, how can they prove their value?); and the horrors of procurement.

In part, we don’t think about these new techniques as much. In addition, it’s harder for our internal clients to agree to a new methodology given the uncertainty of the outcome; having never used it before, it’s unproven to them.

They’re so far behind in general market research adoption that even the most basic approaches are new to them. It’s a matter of getting them used to even the idea of research before jumping into the latest & greatest approaches.

I feel somewhat slowed down by connecting business needs with trustworthy outputs (and sometimes with new suppliers) and the fast pace my partners expect.

If I’m given full latitude to bring on my own vendors, I’m an early adopter. If the business wants to approve, everything slows down.

I wouldn’t want to rely on something new and unproven to make important business decisions. If something is proven to work, then I’d consider using new methods.

Limited by our horribly restrictive approved supplier list. There is internal marketing and insights support for innovation but trying new suppliers is a nightmare with contracts.

Another impediment, apparently, is old white guys.

Budget restrictions and old white guys making the budget decisions.

Number of decision makers from a certain era.

Old people leaving the company and new people coming in helps a lot. The older stakeholders just want to do what was done before and don’t like taking risks – even though one of our company values is bravery.

In lieu of full-blown pilot tests, readers cited a few useful strategies for assessing new approaches:

We layer new techniques into projects with core traditional elements to allow stakeholders to dip their toes into a method without putting all their eggs in an untested basket.

We use low-cost trials to help gain internal adoption.

While cost is understandably a primary influence on the choice to implement new methods, data quality, with a whopping 70% of respondents rating it “extremely important,” is clearly the main driver. Factors such as speed of deliverables, question flexibility, audience specificity and in-depth analysis of data also earned high percentages of “very important” or “extremely important” ratings.

Biggest change

Asked this year about the biggest MR-related change they foresaw their organizations making, respondents largely focused on staff sizes (hoping for expansions, bracing for reductions), shifts in tool and method usage (many mentions of doing more DIY, agile and AI-powered research) and adjustments to the internal view and use of the insights function.

There were more mentions than in past years of creating in-house/proprietary panels, likely due to the concerns about data quality and panel sample that respondents expressed when answering a later question about the areas of the industry that frustrate them.

And several indicated plans to add or enhance voice of the customer initiatives.

We are starting (and I do say “starting” loosely) to consider merging customer feedback with operational data as part of our new voice of the customer platform we are implementing this year with a vendor.

Some report leaning on vendors for more work since they can’t find in-house people to hire; others say they are reducing their reliance on vendors and bringing more work in-house, either due to budget cutbacks or the need for more control of project completion timelines.

We’re planning deeper dives into our current portfolio. The last few years have been very focused on innovation and new platform growth. Now we are very focused on platform efficiency and improving/maximizing what we already have and is working!

Leadership changes are also impacting internal research functions – mostly in a good way.

Executive support for organic growth (vs. acquisitive growth) is growing. As a result, we’re excited to see new product management roles open up that are dedicated to identifying innovative opportunities and we’re getting some really engaged champions behind marketing research initiatives. As a result, we’re seeing our requests for research increasing overall vs. prior years.

Our new CEO is placing more emphasis on voice of the customer and I expect the demand for our services to continue to build.

We have a new CMO in our division. She is promising to help get a team of four to support insights (up from just me).

We have had a change in leadership and they value vendors. We historically have done the majority of our research in-house and it’s likely that is going to get outsourced.

Most frustrating

Late in the survey we asked an open-ended question about the areas of marketing research that respondents find most frustrating, and readers didn’t hold back. Falling response rates and data quality in general, and panel data in particular, were top of mind for many.

Data quality from sample vendors. We regularly refuse/return nearly 50% of all gathered data as it is rife with fraud (as evidenced by open-end responses and failing red herrings). CPI for our target audience is typically $80+ and it’s just crap.

I’ve encountered some really awful sample over the past year. And that scares me because what I’m doing now (very expensive B2B research) requires top-quality participants.

Panel sample. It’s horrible. We all just accept that 15-30% of the sample is complete crap and maybe, with a little diligence, we can clean out most of that and be left with 5-10% that’s still crap, but we’re not sure which ones.

Sample quality has really gone downhill, despite security measures in place. I get so many questionable completes in my data. It’s really sad and frustrating.

Keeping response rates up. Moving outside of our current lists into respondent lists that are not our “core” is also difficult as it requires reviewing panels that are available through different suppliers.

Elsewhere, annoyances generally fell under a handful of categories, including internal pain points, tools and the industry overall.

Pain points

Internal clients who want a project yesterday but who delay the project by months after we’ve hired a vendor.

The focus on new technology and automation. The strength of MR is in people analyzing data in the context of business. What is being called AI can’t do that.

Old-school methods that my organization still insists on using (paper mail surveys!).

The amount of research that gets done that is unnecessary. We need to be better as an industry at saying no when research isn’t needed or won’t answer the question.

It’s the red-headed stepchild in most CPG organizations, which at the same time say, “Everything we do starts with the shopper/consumer.”

Tools

Robust DIY survey platforms that are mobile-first. The good platforms are laptop-first, mobile as an afterthought and we need the exact opposite.

I hate cheap survey tools and anything that “democratizes” any part of the business. We need to remove self-service research by the untrained.

I would love to find a really great online survey tool that allows me to do full analysis too – they all claim to but I always end up pulling data out and analyzing elsewhere.

(1) Relying on modeling to describe/predict consumer behavior and forgoing actually talking to consumers. (2) Using NPS as the primary indicator of customer satisfaction/loyalty. (3) Effectively integrating text and voice analytics into analysis of customer perceptions (problems/opportunities).

Online surveys are our bread and butter. But response rates are reaching a point where they will no longer be feasible. Fortunately I built an in-house panel a few years ago and they still respond well. But I can’t use them for everything.

The industry

Endless new buzzwords – same challenge, just a new word to describe it.

Excel sheet-based surveys that force translators to twist sentences into pretzels to match English syntax.

As an industry we often oversell new research approaches as the next big thing to drive new business. We often do this at the expense of actually knowing the capability of the new approach and whether it is stand-alone or needs to be used with more traditional approaches to get a full picture of the research. Based on the sales pitch, customers think the new approach will give them complete market clairvoyance, only to be disappointed. I give you “big data.” It was oversold by the industry and we do not hear it in the marketing pitches anymore (it’s been renamed), but it is a great tool when the marketing firm and the customer begin the process with an actual question used to interrogate the data set.

[Marketing research] hasn’t marketed itself well enough compared to UX research, design thinking, etc., which make MR seem old and staid.

It really appears that the majority of researchers in the industry (or maybe just the most vocal ones) these days automatically jump on the latest bandwagon and ignore input/data that doesn’t agree with their beliefs. I think there’s a lot of data being missed from regular people solely to agree with their preconceived notions and narratives. It seems like the industry has become a herd of like-minded individuals instead of people who are willing to look for answers that they may not like or agree with.

Social media becoming the go-to just because it is easy to scrape the web and quickly produce results. It’s terrifying to see decisions being made at C-suite level simply by looking at social media. But because it is quick it becomes the go-to, irrespective of who the sample truly is.

Back to work

We didn’t ask any questions about it, but it was somewhat surprising not to find a single mention of COVID-19 or its impacts and aftermath across the many responses readers gave us. And while it would probably be inaccurate to say the industry is over the pandemic, the comments this year have a “getting back to work” feel. Researchers are concerned about response rates and data quality, two core issues with a direct impact on what they do and the value they provide for their organizations. They seem satisfied with the tools that are available to them, especially the proven ones like traditional and digital qualitative, but, perennially understaffed and underfunded, they are always on the lookout for ways to do more with less.