Small changes can make a big difference

Editor's note: Mark A. Wheeler is principal of Wheeler Research LLC, Bryn Mawr, Pa.

All moderators know that they should try to ask open-ended questions to avoid leading their respondents to particular answers. But in the real world of moderating, it is usually impossible to know just how much our style of questioning is influencing the responses that we’re hearing. On the basis of both research in behavioral science and recent marketing research examples with clients, it’s clear that some small variations in the way we ask our questions are having oversized and potentially biasing effects.

In this article, I will describe a decision-making heuristic called availability that can alter the way our respondents answer the questions that we put to them. Next will be a few examples from published research showing that how questions are framed will change our answers (and even our beliefs and intention to purchase). Then I will give a recent example with a client where we decided to change a key research question and began to hear different answers. The article will end with a discussion of how to ask questions that neutralize any potential biases.

Thought leaders in behavioral science, particularly Nobel Laureate Daniel Kahneman, have described a long (and still growing) list of cognitive heuristics, or shortcuts, that guide our decisions and behaviors. According to the availability heuristic, when an idea quickly comes to mind (or is brought to our attention), it seemingly becomes more important than it was before. Similarly, if we are asked to think about some action that we might take, then we go on to believe that it happened more often in the past and is more likely to happen in the future. There are lots of relevant examples in the psychology literature, with obvious implications for what we do as marketing researchers. Every time we make an idea available to our respondents, the idea itself becomes more plausible and more likely to be accepted. Of course, this is what a leading question is.

Powerful impacts

Psychologists have shown powerful impacts of small wording differences in questions, even when people are talking about their own lives. In one study, research participants were asked a number of things about their social lives (Kunda et al., 1993). Half of the respondents started by answering the question, “Are you happy with your social life?” while the others were asked, “Are you unhappy with your social life?” They then went on to talk about their social lives. Even though the two groups were treated the same except for a single word, those who were asked if they were unhappy went on to be 375 percent more likely to declare themselves dissatisfied and unhappy with their social lives. In this situation, the availability heuristic is closely related to the confirmation bias. Once a question has been asked, most people try to confirm the idea in the question rather than refute it.

Sometimes this general principle has been used in manipulative ways. The prominent social psychologist Robert Cialdini (2016) recounts how cult recruiters initially bring people into their groups. They may initiate a conversation with a target recruit by asking them if they are unhappy. This kind of question is not a neutral way to gain information – the recruiters are literally helping to establish an unmet need by having people focus on what is wrong with their lives. Cialdini describes how the answer to this initial question can progress naturally to further promotion and manipulation from the recruiter (e.g., “If you’re unhappy, would you like for us to help you?”).

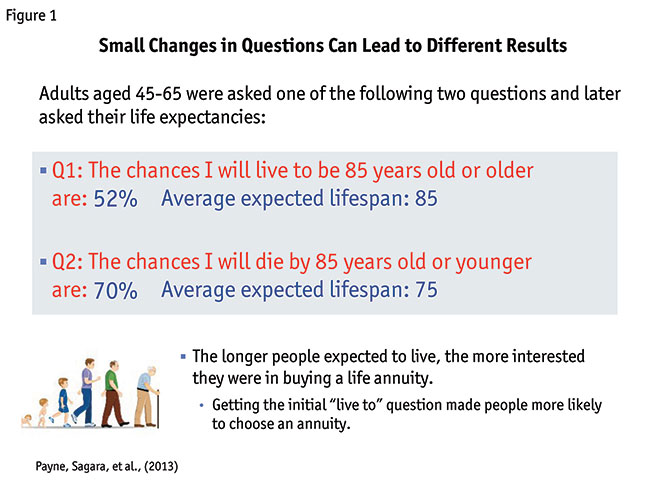

Moving back to the more ethical world of science and marketing research, some other behavioral scientists took these ideas even further, showing that small variations in language can lead respondents to give different answers – and then even to anticipate their future lives and future investments very differently (Payne, Sagara, et al., 2013). In a set of experiments, the authors asked adults about their life expectancies – the critical variable again depended upon just a few words in the questions. In one of the key comparisons, respondents (who were 45-65 years old) gave ratings to the questions shown in Figure 1. Half of them were asked to estimate the percentage likelihood that they would live to age 85 or older, while the others were asked the likelihood that they would die by age 85 or younger. If the respondents could answer rationally, then the average answers for each question should be mirror images of each other.

But the mere thought of living to a certain age, or dying by that age, led the two groups in different directions and gave them two different kinds of thoughts. The “live-to” group gave an average estimate of 52 percent likelihood of living to at least age 85, while the “die-by” group estimated a 70 percent likelihood of dying by that age. When the authors looked at respondents’ expected age of death across all of their studies, they discovered that people who had answered “live-to” questions expected an average lifespan of 85 years compared to only 75 years for the “die-by” group. A very powerful effect from just a few words!
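For readers who want to check the mirror-image logic, here is a minimal Python sketch. It assumes the two questions can be treated as exact complements (so consistent answers would sum to 100 percent). The function name `framing_gap` is hypothetical and is not taken from the published study.

```python
def framing_gap(live_to_pct: float, die_by_pct: float) -> float:
    """Gap, in percentage points, between the two framings.

    Assumption for illustration: living to 85 and dying by 85 are
    treated as complementary, so consistent answers sum to 100.
    """
    # What the "die-by" group's answer implies about living to 85.
    implied_live_to_pct = 100.0 - die_by_pct
    return live_to_pct - implied_live_to_pct


# Averages reported in the article for the two groups.
gap = framing_gap(live_to_pct=52.0, die_by_pct=70.0)
print(f"Framing gap: {gap:.0f} percentage points")
```

With the averages quoted above, the "die-by" answer implies only a 30 percent chance of living to 85, so the two framings sit 22 percentage points apart instead of matching.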

The researchers took this one step further and later asked the participants about their interest in buying a life annuity. A life annuity guarantees people a specified level of income for as long as they live and is an attractive option for people who are worried about outliving their income. The drawback, of course, is that the income from the annuity stops when people die. Therefore, life expectancy has a massive influence on whether people should consider buying the annuity (i.e., if you think you will live a long time, you should buy one; otherwise, you may want to avoid this investment).

In this study, interest in buying an annuity turned out to be determined by how long respondents thought they would live – and also by whether they had been randomly assigned to the “live-to” or “die-by” group. For the half of respondents who had recently estimated that they would live longer, the average likelihood of purchasing the annuity was 39 percent, compared to 26 percent for the group who had not estimated that they would live as long.

The findings should make all moderators and marketing researchers wary about the impact of the availability bias and leading questions. Probably no one would have considered a question such as “What is the likelihood that you will live to age 85 or older?” to be a highly leading question but it is. This “live-to” phrase altered people’s perceived life expectancy and later made them much more likely to claim they would buy an investment. To put that into a different perspective, the gain in interest in life annuities (a jump from 26 percent to 39 percent) was probably a bigger boost than companies usually see from the entirety of their lengthy and expensive marketing campaigns.
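The size of that jump can be expressed two ways, and the difference matters when reporting it to clients. The short Python sketch below separates the percentage-point change from the relative change. The helper name `lift` is hypothetical, and the inputs are simply the two averages quoted in this article.

```python
def lift(baseline_pct: float, treated_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in percent)."""
    point_change = treated_pct - baseline_pct
    relative_change = point_change / baseline_pct * 100.0
    return point_change, relative_change


# "Die-by" group (26 percent) versus "live-to" group (39 percent).
points, relative = lift(baseline_pct=26.0, treated_pct=39.0)
print(f"{points:.0f} percentage points, a {relative:.0f} percent relative increase")
```

On these figures, a 13-point gain is a 50 percent relative increase in stated purchase likelihood, all from the wording of an earlier question.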

Easy to add balance

Fortunately, there is a way to minimize any potential problem with leading questions. It turns out to be easy to add balance to a question to prevent biasing respondents one way or another. There is little doubt that many of us who are moderators and survey designers do this sometimes – we should probably do it even more often.

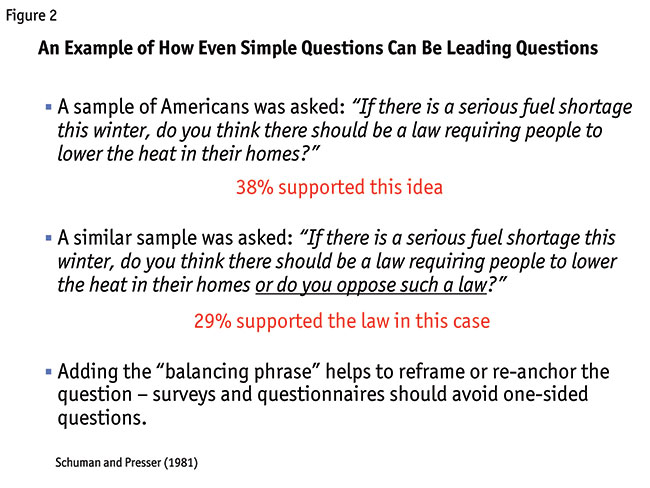

A good example comes from some work from many years ago. In a published experiment by the researchers Schuman and Presser (1981), respondents looked at some public-policy questions that reflected one of the concerns of that time: the energy crisis and the potential for fuel shortages. Two of the questions used in the research are shown in Figure 2. The first question asked if there should be a law requiring people to lower the heat in their homes; 38 percent agreed with the idea. In the second question, the researchers didn’t change the meaning of the query at all but merely added the language “or do you oppose such a law?” The additional phrase kept the question from being leading, as suddenly the options of being either in favor of the proposed law or against it were both made available. The percentage in favor dropped to 29 percent.

When learning about these examples, it is tempting to ask which answer is the “real” one – if we can frame questions differently and get different answers, then how can we know which answer is right? The resolution is pretty clear to behavioral scientists but may not be extremely reassuring to all of us – or to our clients. When people are asked novel questions, or questions that require them to imagine what they might do in the future, there is literally no preexisting correct answer. People in marketing research interviews (and also in real life) answer novel questions by constructing the answer at the time of their response. They consider (sometimes very briefly) what their best response should be and then they go with it. When people hear the top question in Figure 2, they think about possibly supporting the law. The bottom question stimulates them to think about why they should support or oppose it. Even though both questions would generally be considered to be fair and non-leading, the specific phrasing of the probe has an irrationally large influence on the answers.

Substantive differences

Even experienced client and marketing research teams can benefit from becoming sensitive to leading their respondents and including balancing phrases in their questioning. A recent marketing research project with a health care client provided a relevant example of how minor variations in questioning could lead to substantive differences in the key takeaways from research. (Note: To protect client confidentiality, there are a lot of details altered in the example below. The key points are accurate but the superficial details have either been changed or replaced by generic language.)

The client was interested in moving forward with plans to develop a new medicine for a very serious disease state. An existing option, here labeled as Product X, was first in its drug class and was usually judged as an effective medicine. It also required patients to self-inject the medicine at their homes. The new drug option that was being developed by my clients operated with a similar mechanism of action but was an oral formulation. In a prior round of research, before I became involved, the brand team had determined that self-injection was a barrier to use of Product X and that there was an unmet need for a similar medicine with a friendlier formulation.

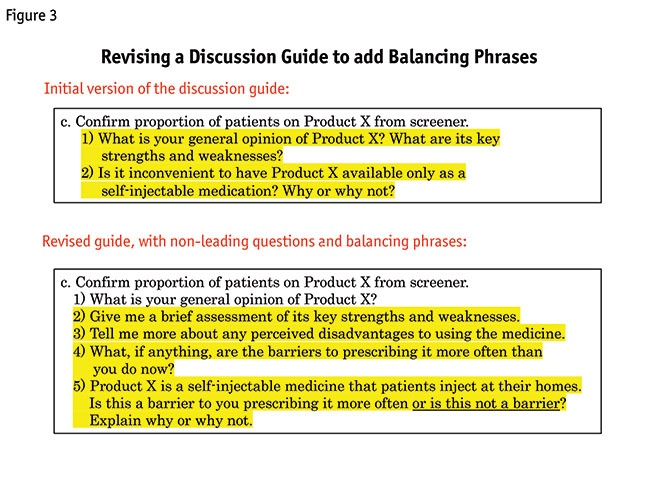

The new marketing research involved a number of different objectives but one key goal was to further explore the unmet need regarding the formulation. I was given the discussion guide from the prior research, which included the questions at the top of Figure 3. The guide followed a pretty standard approach – the section began with open-ended questions (e.g., “What is your general opinion of Product X?” “What are its key strengths and weaknesses?”) before the key question about self-injection. But the key question here could reasonably be considered to be leading because it brought up the possibility of self-injection as a barrier to prescribing, without a corresponding balancing phrase.

I discussed this with the client and suggested some other ways to probe and explore the same issues with different language. We arrived at the questions at the bottom of Figure 3. These probes again started open-ended (e.g., “What is your general opinion of Product X?”) and slowly became more specific, to see when, if ever, doctors began discussing self-injection as a barrier to use. On the key question (c5), the mention of self-injection includes a balancing phrase so that doctors could choose to describe that as a problem – or also to consider that it wasn’t a big problem.

By changing the probes from the first study to the second, the overall significance of self-injecting Product X also changed and it became much less important to doctors. (If you are curious, the screening criteria were the same for the two research projects and the two phases of research were separated by only a few months.) In the initial study, doctors discussed the requirement that patients had to give themselves shots at home and they talked themselves into this being a significant issue surrounding whether to prescribe Product X. In the second project, doctors discussed all barriers to prescribing and they were offered the possibility that self-injection was not a major concern. Here, self-injection fell well outside of the top four existing barriers to using more of Product X. Instead, clinical concerns including efficacy and tolerability were considered to be much bigger factors in their decision-making. The newer version of the probes still allowed for a chance for doctors to discuss issues around self-injection but this discussion turned out to be more balanced than before.

To my relief, the clients were very happy to hear doctors’ perspectives following this new set of balanced probes. It gave them a stronger understanding of challenges related to launching a new oral product. (And of course, there are probably some client teams out there that would prefer to have more positive answers from semi-leading questions. But most teams spending money on research are looking for a candid picture of whatever is really going on.)

Doesn’t sway respondents

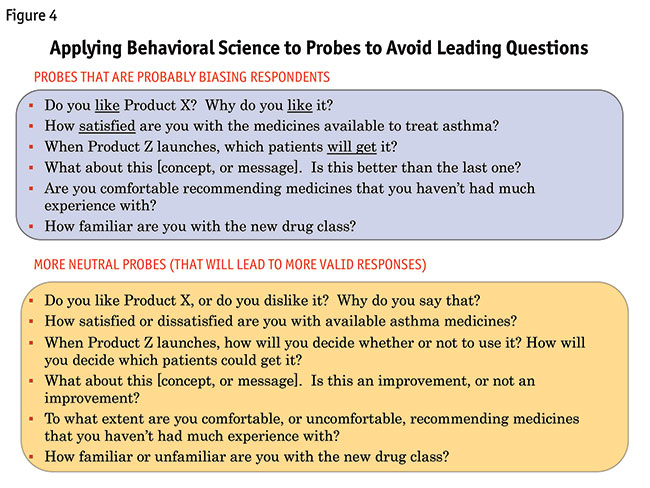

The strongest research questions, for both qualitative moderating and online quantitative surveys, will involve balanced probes rather than probes that only ask about one possible outcome. Some examples of both non-balanced and balanced questions are shown in Figure 4. The logic behind the balanced questions isn’t very difficult to follow. Rather than asking about something (e.g., an attitude, a decision, a future behavior), you ask about that thing and also its opposite, so the momentum of the question doesn’t sway respondents in one particular direction.

When I look at a discussion guide before moderating, I will quickly do a review of the questions and make a note of any probe that could nudge respondents toward affirming anything that is mentioned in the questions. (And yes, I also do this for guides for which I have written the first draft.) I will then either alter the guide or, when that isn’t the right approach, I make a note to actually ask the question with an added balancing phrase. This quickly becomes second nature. Also, for what it’s worth, clients are perfectly happy with this approach and they quickly understand why the added language is valuable even though the new phrases do admittedly make each question a little longer.

Behavioral science tells us the situations that should be the most vulnerable to the availability bias and leading questions. People are most influenced by decision-making heuristics under conditions where the questions are novel or difficult or if we are asking about outcomes that are uncertain. In other words, we probably don’t need to use balanced questions when we ask for opinions about bureaucracy or ice cream or Donald Trump. Everyone already knows what they think about these topics. But in marketing research we pride ourselves on asking the novel and insightful questions and, paradoxically, these are the situations when we may be most liable to unintentionally lead our respondents in a certain direction. With a novel or difficult question, respondents often construct their answers only after considering the question and this is when the wording matters the most.

References

Cialdini, R.B. (2016). Pre-Suasion: A Revolutionary Way to Influence and Persuade. New York: Simon & Schuster.

Kunda, Z., Fong, G.T., Sanitioso, R., and Reber, E. (1993). “Directional questions direct self-conceptions.” Journal of Experimental Social Psychology, 29(1), 63-86.

Payne, J.W., Sagara, N., Shu, S.B., Appelt, K.C., and Johnson, E.J. (2013). “Life expectancy as a constructed belief: Evidence of a live-to or die-by framing effect.” Journal of Risk and Uncertainty, 46, 27-50.

Schuman, H., and Presser, S. (1981). Questions and Answers in Attitude Surveys. New York: Academic Press.