Editor's note: Graham Page is executive vice president, commercial research and innovation at iMotions, with more than 30 years of experience integrating biometric and behavioral measures in advertising research. During his time at Kantar, he led the team that built many of the brand and advertising research frameworks used today by advertisers worldwide.

At recent market research conferences, one theme has stood out: commercial research has moved from fearing AI to figuring out how to use it. A year ago, the mood was anxious. Now, those same people are being more pragmatic. The industry now sees clearer paths for adopting AI tools but one of the most hotly debated applications remains the use of synthetic data.

AI is already reshaping research in four major ways:

Moderation tools: Using large language models (LLMs) to design and even conduct interviews.

Operational agents: Automating workflows to cut time and cost.

Automated analysis: Using AI to code open-ended responses, extract themes and visualize findings.

Synthetic data: Generating new data points based on patterns from real responses.

Each approach carries pros and cons but synthetic data is one that perhaps most challenges the core purpose of research: understanding real human behavior.

Synthetic data has already had a positive and powerful effect in industries like automotive, health care and finance, where it enables better AI training for rare occurrences. It enables safe crash simulations, privacy-preserving medical research and better fraud detection. In market research, however, the challenge is different. Instead of predicting physics or patterns in medical scans, we’re trying to understand capricious human behavior. Still, synthetic data is being used in three key ways today:

Creating personas: LLM-powered “synthetic audiences,” based on current and past research, that can be queried in natural language to explore how different consumer types might respond.

Filling gaps in data: Estimating missing survey responses using patterns from previous participants or similar studies.

Boosting sample sizes: Generating digital twins of hard-to-reach participants to strengthen small-sample studies.

The appeal is obvious. Recruiting real participants takes time and money. If AI can expand or enhance datasets, insights can be generated faster and more efficiently. But that efficiency comes with trade-offs.

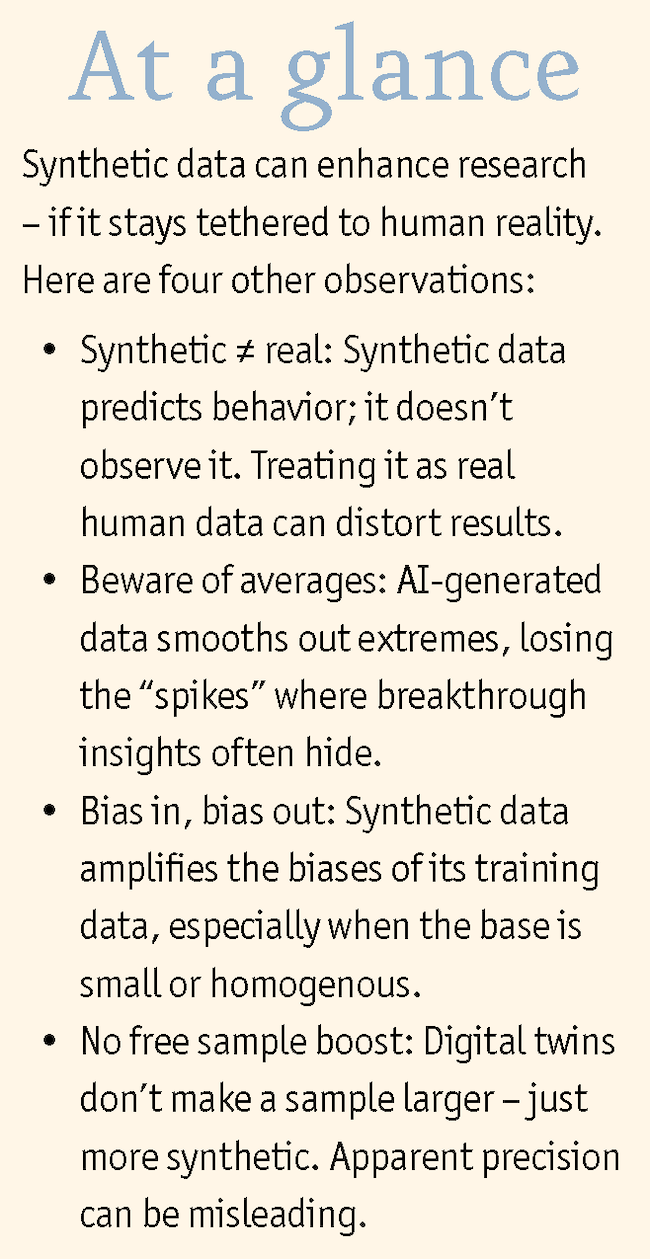

Obviously, synthetic data isn’t real data. And that distinction matters. It represents predictions, not observations, so treating it as equivalent to human data is potentially misleading. Updated industry guidelines rightly emphasize transparency when using or reporting synthetic data.

Here are five issues researchers should bear in mind:

Regression to the mean: Synthetic data tends to produce “average” responses and tends to smooth out the highs and lows, which is often where the most interesting insights lie, especially when testing new products or ads. For example, in our testing, predicted eye-tracking data shows strong center bias. It may be accurate on average but misses nuance at the edges that real human data reveals.

Weakened relationships: Small estimation errors accumulate, diluting correlations between variables like brand perception and purchase intent.

Bias magnification: Synthetic data inherits and amplifies biases from its training data. The smaller or less diverse the base data, the greater the distortion.

No statistical magic: Digital twins don’t increase the real sample size. Thirty real participants remain 30 participants, even if the model based on them generates 170 more. The answers may seem more precise but they are no more generalizable.

Cost misconceptions: Generating high-quality synthetic data can be more expensive than collecting real data. AI may be faster but it’s not always cheaper.

Synthetic data has enormous potential to make research faster and more accessible but only if harnessed responsibly. That means:

Be realistic: Clearly distinguish between real and synthetic data in every report. Treat augmented results with appropriate caution.

Be pragmatic: Use real people when exploring new ideas or creative concepts. Synthetic models are more useful for iterative optimization or when speed matters more than accuracy. For example, use human testing to validate a new ad campaign concept but deploy AI to test the hundreds of executional iterations necessary in modern media plans.

Keep feeding the machine: Synthetic data can’t replace real data as the training source. Models trained on their own output will drift from reality, “choking on their own exhaust.”

Keep listening to humans

The arrival of generative AI is a transformative moment for market research. Synthetic data can augment our tools to accelerate insights and scale experimentation but it cannot replace the messy, emotional and unpredictable input of real people. Used transparently and in the right contexts, synthetic audiences can expand what’s possible in research. But to keep our insights real, we also have to keep listening to humans, not their digital echoes.