Will AI replace respondents in health care marketing research?

Editor’s note: John Friberg is vice president at Healthcare Research Worldwide (HRW), a global health care research agency.

Among the many use cases proposed at conferences and in articles by industry personnel over the past year, one idea has become particularly disruptive – that we will be able to use ChatGPT and other such open AI platforms as a source of data, rather than just as a tool to aid in the collection process.

In other words, some within the marketing research industry suggest that ChatGPT will soon serve as a well-informed, fully qualified market research respondent, able to talk to our moderators about an endless list of research topics.

How realistic is this proposition? Will there be some types of market research where AI can replace respondents and other types where it cannot?

How comfortable are we with AI-generated health care information?

There currently exists a topic “ceiling,” beyond which many of us are no longer comfortable relying on the advice of a generative AI platform.

Try asking yourself the following question: What wouldn’t I take ChatGPT’s sole word on?

That answer often includes examples related to the health and well-being of ourselves and those we love. For these decisions, we usually want to consult with a fellow human, preferably one with training and experience in a relevant field of expertise.

It’s likely that, as more studies are conducted and their results publicized, trust in AI as a source of the health care information we would normally get from our doctor will increase, and our AI “ceiling” of comfort will rise.

For now, many patients feel that the health care information being provided by AI platforms does not meet the standard of reliability necessary in real, clinical practice.

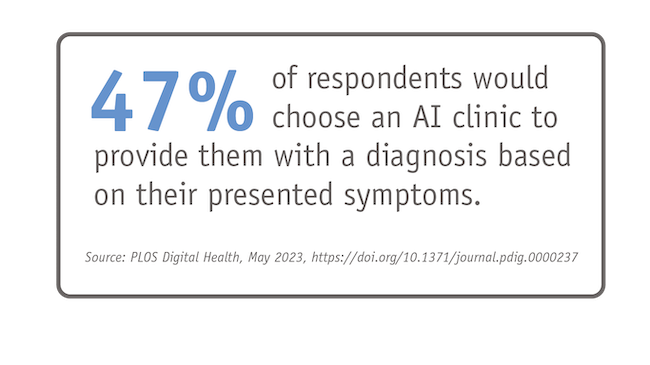

A recent study funded by the National Institutes of Health and conducted by the University of California, San Francisco, found that respondents were still almost evenly split between choosing a human doctor (52.9%) and an AI clinic (47.1%) to provide them with a diagnosis based on their presented symptoms.

It’s this same sentiment that makes health care market research a particularly difficult nut for the robots to crack. Our business objectives often deal with serious conditions, which can limit the degree to which we are comfortable relying on AI as a source of insights.

Health care marketing research: Medical expertise and AI

In addition to the gravity of subject matter, health care is a nuanced and complex field that continues to change and evolve every day.

Overall, ChatGPT has made immense strides in its ability to talk about medical topics.

A promising study in the February 2023 issue of PLOS Digital Health reported that physicians associated with Beth Israel Deaconess Hospital were able to use ChatGPT to pass all three steps of the United States Medical Licensing Exam (USMLE) without the platform undergoing any additional training or reinforcement on the contents of the exams. The USMLE is a set of three standardized tests of expert-level knowledge that are currently required for medical licensure in the United States. Passing all three tests marks a significant milestone in the progress of AI and large language models, and it speaks to the potential of this technology moving forward.

As of the writing of this article, however, there are still limitations built into the ChatGPT platform that restrict access to information made available after December 31, 2021. OpenAI, the company behind ChatGPT, has addressed this limitation for premium subscribers of the platform by integrating Microsoft’s Bing search engine. Generally speaking, though, this limit on the processing of recent information means that a response from ChatGPT cannot be considered to reflect a fully contemporary understanding, despite its success in general physician qualification testing.

ChatGPT and similar platforms also work with publicly available data, which does not include individual health records. For now, ChatGPT has access only to medical texts, clinical trials, research papers and other published sources of medical information.

As such, there’s no true “primary source” information – i.e., patient records – for ChatGPT and other AI platforms to learn from. These platforms operate using information in aggregate, rather than learning on an individual, patient-by-patient basis. As any doctor would be happy to tell us, every patient is different, and there are certain intangibles a doctor must account for, an ability that often comes only through years of real-world experience. This area of expertise is difficult to replicate.

By dealing only in aggregated sources, we also inevitably lose the nuance and individuality of health care providers themselves, which can be reflected in topics like treatment approach. This is to say nothing of the differences across practice settings or geographic locations, which sample-based research methods make clear.

The health care industry recognizes the potential inherent in these tools and acknowledges the ways in which access to clinical health records can aid in AI’s ability to provide reliable and accurate medical advice. To make this possible, serious problem-solving is underway to answer questions about how to maintain patients’ privacy and confidentiality while still improving the information that these tools can provide. Ultimately, the vast wealth of individual patient data that is being collected every day by doctors, hospitals, apps, phones and so on, if made available to these platforms, could empower the models to deliver more precise responses to the clinical questions posed.

Applications for generative AI in patient marketing research

For now, it seems that we’re much more likely to get useful AI-generated insights when it comes to patient research, where emotion and experience can be modeled with some degree of success without also requiring an understanding of medical science or the experience of medical practice.

AI tools are often able to mirror the behavior of a human in a given situation. A basic example is the way most of us choose to engage with an AI platform like ChatGPT – we ask the chatbot a question, and the chatbot responds to the best of its ability, just as two people would in conversation.

The potential for AI to accurately reflect human behavior extends beyond this back-and-forth chat exchange. Another example includes the way in which we visually engage with an advertisement. Specifically, we’re talking about the areas of an ad that are looked at first, the time spent looking at each element of an ad and the direction of eye movement from one place to another across the page.

At our research agency, we have adopted an AI-powered visual prediction tool, informed by hundreds of previously conducted eye-tracking research studies and validated against many of those previous projects. Using this tool, we can understand how people are likely to engage visually with various communication concepts without any actual human participation in eye-tracking exercises.

Beyond the more concrete realm of behavior, we’ve also shown that AI can comment on human experience in fairly sophisticated ways, specifically focusing on the patient experience across many different conditions. While this is a topic for another day, the results of our initial experiments are positive!

ChatGPT is not a stand-in for human respondents

It’s important to note that while ChatGPT can be a valuable tool in health care market research, for the reasons listed above it cannot serve as a stand-in for a human sample.

In addition to an up-to-date understanding of their medical specialty, health care professionals possess an emotional and social understanding of their patients that can be equally important to our market research outputs.

ChatGPT also provides what can be considered a single viewpoint, developed only from aggregated sources – yet it is often in the conflicting viewpoints of physicians that we find our strongest insights.

Researchers should always validate the outputs generated by ChatGPT and consider its limitations.