A more informed process

Editor's note: Linda Naiditch is assistant vice president at Mathew Greenwald & Associates, a Washington, D.C., research firm.

You don’t know what you don’t know. This truism often lurks in my mind as I am designing a questionnaire. I feel it is my job to ask just the right questions so that my clients can obtain the information they need. But I can’t be sure whether I have hit the mark unless I know how respondents will understand and interpret my questions. Perhaps the best way to illustrate my concern about the unknown is to ask you to imagine what would happen if:

- respondents don’t see an answer that reflects their thinking in a list of response options to a particular question;
- a listing of attributes does not include all of the factors that would be relevant to the client’s objectives;
- respondents think a term means something different than what you intended;
- respondents completely misunderstand the thrust of the question; or
- you just didn’t think of something and leave out what would be an important component to the study.

Well, the simple answer is that the results you deliver to clients would either be incomplete or based on suboptimal data that you thought was just fine.

Researchers currently use a variety of methods to try to avoid these problems, including: having research colleagues review the draft questionnaire; conducting qualitative research before moving on to the quantitative research; and conducting and monitoring pre-test interviews. Each of those methods can help. But even when we use all three methods, our questions can still be off-target, incomplete or worded in a way that is not effective because there is still something that we don’t know.

Research colleagues who help us with their expert review can often see gaps and help fill them in. But we and our colleagues often have very different life experiences from the subjects who will be completing the survey, so we cannot think like them or imagine every situation that may apply to them.

Preceding quantitative research with qualitative research is very helpful in guiding the development of survey questionnaires. But when we have finished our draft questionnaire, we still do not know if we have hit the mark.

Telephone pre-tests have the potential to reveal a variety of issues but their effectiveness is dependent on respondents being willing and able to communicate their misunderstanding to the interviewer and on the researcher being extremely sensitive to hesitations and other subtle signals of problems. We cannot count on all respondents cuing us to problems in the questionnaire – they may even be unaware that their understanding of the question is different from what was intended.

We see its value

Where does that leave us? With the need for one additional tool: cognitive interviewing. Our firm has used this approach for a number of years and every time we use it we see its value in making sure we are measuring everything we want to measure and that we truly understand what respondents have told us as we analyze the data.

Cognitive interviewing is a specialized type of pre-test that focuses on respondents’ thinking process as they hear or read questions in a survey. It actively delves into how they interpret the meaning of questions and possible responses, what they think about when they are considering how to answer, how they decide on their answers and what their answers mean.

In our firm we have conducted cognitive interviews over the telephone and in person for surveys that will be administered by telephone as well as self-administered online surveys. Our methods are primarily drawn from Gordon B. Willis’ Cognitive Interviewing: A Tool For Improving Questionnaire Design. These methods include:

- asking respondents to rephrase the question, or the response options, in their own words;
- asking them to tell the interviewer what they are thinking as they consider the question, consider their answer and decide upon their actual response;
- asking respondents what specific words or phrases in selected questions mean to them;
- asking how easy or difficult a question is to answer and, if it is not easy, probing for the causes of difficulty;
- looking for any cues that may indicate an issue, including hesitation or information provided in one question that seems to conflict with the information provided in another.

In addition, we sometimes ask respondents how they would answer a pre-coded question before they have seen the response options, to ensure we have presented all relevant categories. We may also repeat a question with slightly different wording later in the questionnaire to see if it elicits a different response. If we hear responses that seem inconsistent, we probe for the reason why. We observe and listen for nonverbal cues that a respondent is having difficulty or is confused.

A few examples from cognitive interviews that we conducted for a recent publicly released survey about nutrition illustrate their value. One trend question asked respondents to rate how much impact factors such as taste, price, healthfulness and sustainability have on their food selection. With our understanding that “sustainability” can connote ecological, economic and social aspects of food production and sales, and knowing that a surprisingly high proportion of the population reported in a past study that it significantly impacted their food purchase decisions, we decided to explore the concept in our cognitive interviews.

A young woman named Angie explained that, to her, sustainability meant how long food would remain fresh if she put it in the freezer. Similarly, a middle-aged male thought it related to a food’s shelf life. With such different meanings to different people, the term would not be useful.

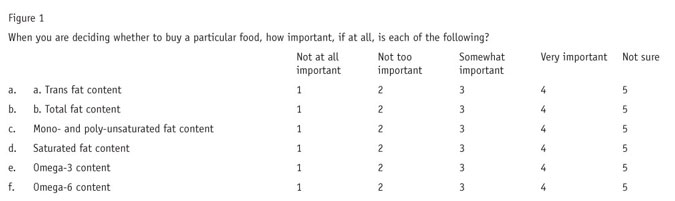

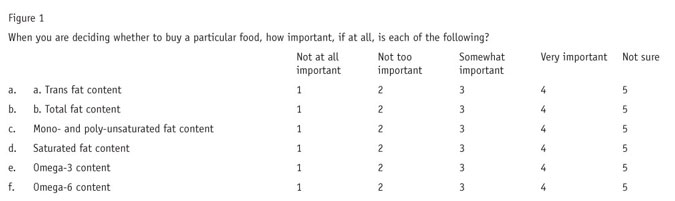

Later, in a cognitive interview with a middle-aged man named David, we posed the question shown in Figure 1 from the same draft questionnaire.

In explaining his answers to this question, David noted that some of the food components listed are good to include in one’s diet, such as omega-3s, while others such as trans fats and saturated fats are bad. When we initially drafted the question, we expected it would measure how much weight, if any, respondents placed on these ingredients, regardless of whether they are good or bad for one’s health. David, however, chose the “very important” option when he wanted to indicate that a food component was a good one that he sought to include in his diet and “not at all important” to convey the component was something bad to be avoided.

David’s thinking prompted us to revisit this question in depth with the client and we ultimately separated the question into two parts. The first asked, as a yes/no question, whether respondents considered whether the foods they purchased contained these types of fats. A follow-up question then asked whether they were seeking to consume or avoid each one.
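For readers who script their surveys, the two-part structure can be sketched roughly as follows. This is only an illustration, not the instrument we fielded: the component list, the function name `ask_two_part` and the answer labels are hypothetical, and the sketch assumes the follow-up is shown only to respondents who answer "yes" to the first part.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical component list; only these three are named in the article.
COMPONENTS = ["omega-3s", "trans fats", "saturated fats"]


@dataclass
class ComponentAnswer:
    component: str
    considers: bool                   # Part 1: yes/no filter question
    direction: Optional[str] = None   # Part 2: "consume" or "avoid"


def ask_two_part(component: str, considers: bool,
                 direction: Optional[str] = None) -> ComponentAnswer:
    """Record a two-part answer; the follow-up is kept only after a 'yes'."""
    if not considers:
        # Assumed skip logic: no follow-up is recorded after a 'no'.
        return ComponentAnswer(component, False, None)
    if direction not in ("consume", "avoid"):
        raise ValueError("follow-up must be 'consume' or 'avoid'")
    return ComponentAnswer(component, True, direction)


# Made-up answers for a respondent who thinks the way David did.
answers = [
    ask_two_part("omega-3s", True, "consume"),
    ask_two_part("trans fats", True, "avoid"),
    ask_two_part("saturated fats", False),
]
```

Keeping "do you consider it" apart from "seek or avoid" means a single importance scale no longer has to carry both meanings, which was the ambiguity David's answers exposed.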

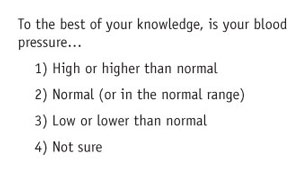

Finally, one more example from David’s interview.

David responded to this question with a question to us: “How should I answer if my underlying blood pressure is high but it is normal because of medication I take?” That was a forehead-slapping moment where we knew we needed two versions of the “normal” response option – one “without medication” and one “with medication.” The revised question turned out to be very useful in analysis of differences between subgroups of respondents.

Flashes of insight

These are just a few examples of ways in which cognitive interviewing can help improve a draft questionnaire. The interviews help immensely in the refinement of what you have down on paper already but they also sometimes give you flashes of insight, golden nuggets of understanding that result in changing your approach to a given line of questioning. In particular, cognitive interviews offer the following benefits:

- They can reveal when you are off-target in your inquiry.
- They can identify when you are missing a dimension that is integral to respondents’ thinking.
- They help you word questions and response options in a way that is meaningful and unambiguous to respondents.
- They reveal when respondents have underlying assumptions that affect their responses and suggest ways to address the issue.
- They help you write questions so that they can be answered more easily and more accurately.

In addition to helping you improve your questionnaires, cognitive interviews provide objective evidence that helps market research firms to back up constructive criticism of client-worded questions.

Just a handful

If you are considering trying out cognitive interviewing, you will likely wonder how many interviews you need to conduct. The good news is that just a handful can often be extremely impactful. We have found that as few as five make a big difference. However, your chances of catching all the problems with your questionnaire rise in linear relation with the number that you conduct, according to a study outlined in “Sample size for cognitive interview pretesting” in the winter 2011 Public Opinion Quarterly. Willis himself recommends three rounds of cognitive interviews, each with 10 interviews.

The bottom line is that whenever a project timeline and budget allow, we try to include cognitive interviewing as part of our proposed questionnaire design process. We like having this technique in our toolbox and we think if you try it, you will too.