Editor's note: Rick Kieser is CEO of Ascribe, a Cincinnati software firm.

Voice of the customer (VoC) and customer experience management (CX or CEM) programs are critical keys to gaining and sustaining customer engagement and loyalty. There is no shortage of data available to analyze – customers are talking more than ever and the market research industry is rich with ways to listen, collect and understand what they are saying. With the technologies and providers currently available, companies can transform disparate feedback channels for customer comments – both solicited and unsolicited – into timely, actionable insights.

A growing number of vendors are investing in rules-based text analytics and combining it with survey technologies to create “platform solutions” that promise one-stop shopping for collection, analysis and application, together with cost savings. There may be benefits, but there are also important and sometimes obscure trade-offs. Fulfilling the platform promise is deceptively difficult. Locking in to a single text analytics technology requires the company to conform to it, rather than applying the right point solution at the right time, customized to the company’s situation and data. There are important considerations and options that should stay on the radar for optimal efficiency and effectiveness.

Just as CX/CEM software has reached a level of maturity, so too has software for text analytics, mostly based on an underlying set of techniques termed natural language processing (NLP). The predominance of this method in commercial software risks overshadowing two complementary text processing methods, which are equally relevant and in some instances are more appropriate in handling very large volumes of feedback data. One is machine learning, an artificial-intelligence approach that learns how to categorize and interpret text from samples previously coded. The other is semi-automated coding, an auto-assisted method that organizes the work intelligently and optimizes human decision-making. (See my article “Navigating the new data streams” in the January 2014 issue of Quirk’s for more on NLP, machine learning and semi-automated coding.)

In a presentation given at the Orlando Confirmit Community Conference in May 2014, Gartner’s Jim Davies estimated as many as 290 providers in the CEM space, with a handful claiming they can do it all, but warned that in CEM, no one solution can do it all.

Set against the backdrop of different – and often disjointed – customer insight initiatives and customer feedback channels, companies can now start to build highly effective technological solutions to integrate feedback, provided they fully evaluate their internal needs and do not settle for suboptimal or limiting technological solutions. With an integrated point-solution approach, the results can be very valuable and a full payback should be expected within a single fiscal cycle, not to mention the long-term upside, as this article demonstrates.

Flexibility is lost

In an emerging world of one-stop-shop platforms, it’s tempting to default to solutions rooted in the traditional core of survey administration and the lure of basic low-cost-per-comment text analytics. There are certainly scenarios in which the selected methodology built into a given platform will be optimal at a given point in time. But there’s a flip side – often what’s lost is flexibility to mesh with existing processes or to grow with the organization, so ultimately what originally appeared to be a convenient or cost-saving solution is only truly efficient in a narrow or limited context. To evaluate your specific situation, consider a very specific set of criteria with four critical components: volume, complexity, cost and maturity.

First, examine the volume of comments you are (or expect to begin) receiving. Volume drives productivity of both human involvement and technology and therefore it is a significant cost driver. To create representative scenarios, we use a four-tiered scale of low, medium, high and very high volume, using the following threshold markers:

- Low: 10,000 customer responses per year

- Medium: 100,000 customer responses per year

- High: 500,000 customer responses per year

- Very high: 1,000,000 customer responses per year

Next we look at complexity based on codebooks (classification hierarchy of customer comments, often referred to as classifiers, taxonomies or codes) on a scale of simple to advanced. Coding is the underpinning of customer experience insights, because it enables insight generation from classified customer responses. For automated technologies, the codebook complexity will determine how much it costs and how long it takes to get a solution to be operational. Thus, complexity drives quality of results along with productivity and accuracy of cost assumptions. For scenario analysis, we classify complexity as follows:

- Simple: 50 classifiers/codes

- Intermediate: 250 classifiers/codes

- Advanced: 1,000 classifiers/codes

Finally, we must make cost assumptions. Notice the bottom line: the very low cost per response with rules-based NLP is at the root of many poor decisions. NLP is most susceptible to complexity, given the rules-based approach and the expense of tuning the solution to make it operational. We use the following industry standards in our assumption model:

- Semi-automated: 30 cents per response

- Machine learning: $50 per hour for tuning; two hours of customization per code; 15 cents per response

- Rules-based NLP: $125 per hour for tuning; 10 hours of customization per code; 10 cents per response
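The assumptions above can be turned into a small cost calculator. The sketch below is one plausible reading of the model, not the exact formula behind the figures in this article: it assumes tuning is a one-time setup cost (hourly rate x hours per code x number of codes) and that the per-response charge applies to every response in every year. The class and constant names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Technology:
    """One coding technology, described by the article's cost assumptions."""
    name: str
    tuning_rate: float     # dollars per hour of tuning
    hours_per_code: float  # customization hours per code
    per_response: float    # dollars per coded response

    def setup_cost(self, codes: int) -> float:
        # ASSUMPTION: tuning is paid once, up front, for every code.
        return self.tuning_rate * self.hours_per_code * codes

    def total_cost(self, codes: int, responses_per_year: int, years: int) -> float:
        # ASSUMPTION: volume and per-response price stay flat over the horizon.
        return self.setup_cost(codes) + self.per_response * responses_per_year * years


SEMI_AUTOMATED = Technology("semi-automated", 0.0, 0.0, 0.30)
MACHINE_LEARNING = Technology("machine learning", 50.0, 2.0, 0.15)
RULES_BASED_NLP = Technology("rules-based NLP", 125.0, 10.0, 0.10)

# Example: a simple codebook (50 codes), high volume (500,000/year), three years.
for tech in (SEMI_AUTOMATED, MACHINE_LEARNING, RULES_BASED_NLP):
    cost = tech.total_cost(codes=50, responses_per_year=500_000, years=3)
    print(f"{tech.name}: ${cost:,.0f}")
```

Under this reading, the low per-response rate of rules-based NLP only pays off once volume is large enough to absorb its setup cost, which grows with every code added to the codebook.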

To complete the comparison and evaluation, we look at maturity, the intersection of volume, complexity and cost over time. As the intersection shifts, the optimal technology solution may change over time as well.

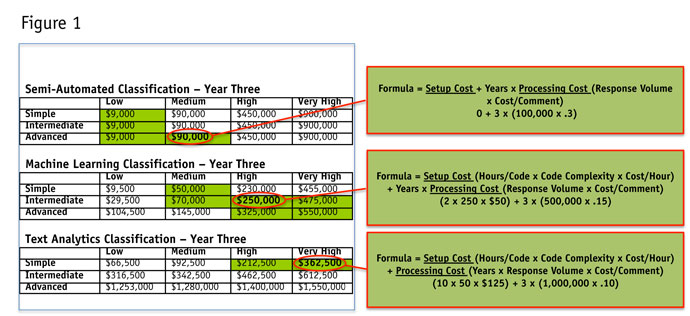

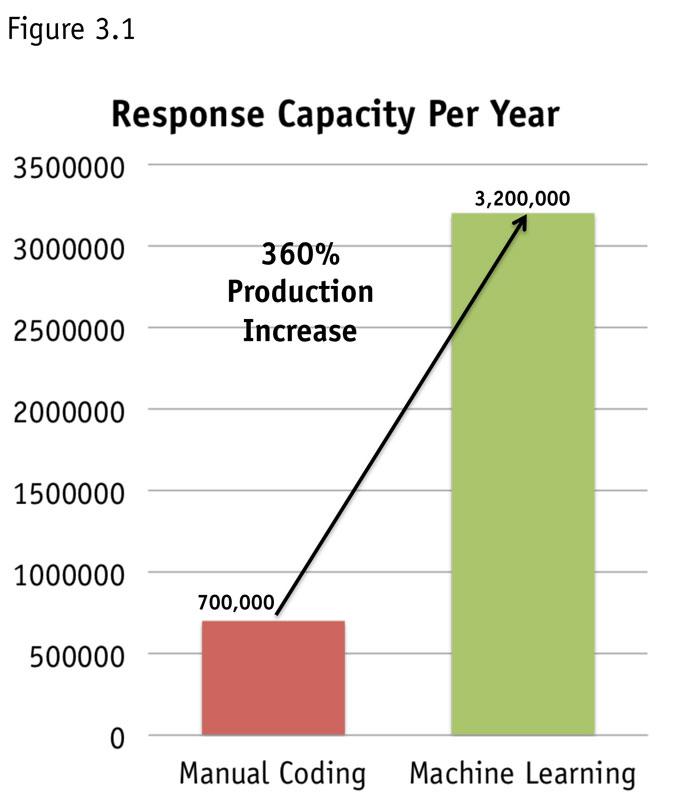

Select a set of time horizons; we used one, three and five years. For each horizon, compare the three potential technology solutions from a cost perspective in a set of 3x4 grids based on the above categories of complexity and volume. See Figure 1, in which the green highlighting shows which combinations the selected solution would “win” from a cost perspective (calculated using the cost assumptions outlined above).

The grid shows relative cost advantage for each technology solution for each volume/complexity combination in year three. So, if the company receives a high or very high volume of simple customer comments per year, the optimal year-three technology from a cost perspective would be rules-based text analytics. But other volume/complexity combinations would favor either semi-automated or machine-learning technologies from a cost perspective at that point.
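To see why the winner shifts with volume and time, it can help to compute a break-even point between two technologies. The sketch below is illustrative only and rests on the same simplifying assumption that tuning is a one-time setup cost; the function name is hypothetical, and rates are expressed in cents to keep the arithmetic exact.

```python
def break_even_responses(setup_a: float, cents_a: int,
                         setup_b: float, cents_b: int) -> float:
    """Cumulative responses at which option A (higher setup cost, lower
    per-response rate) costs the same as option B.

    Setup costs are in dollars, per-response rates in cents.
    """
    if cents_a >= cents_b:
        raise ValueError("option A must have the lower per-response rate")
    return 100 * (setup_a - setup_b) / (cents_b - cents_a)


# Rules-based NLP ($125/hour, 10 hours per code, 10 cents per response)
# versus machine learning ($50/hour, 2 hours per code, 15 cents per response).
for label, codes in (("simple", 50), ("intermediate", 250), ("advanced", 1_000)):
    nlp_setup = 125 * 10 * codes
    ml_setup = 50 * 2 * codes
    responses = break_even_responses(nlp_setup, 10, ml_setup, 15)
    print(f"{label}: NLP overtakes machine learning after {responses:,.0f} responses")
```

With a 50-code codebook, the break-even under these assumptions is 1.15 million cumulative responses, or a little over two years at 500,000 responses per year. With 1,000 codes it rises to 23 million responses.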

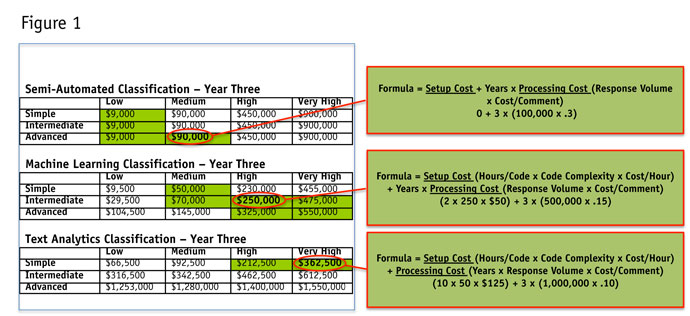

In Figure 2, we summarize the above analysis by “scoring” the winning technology in each combination for each time horizon. So in year three, semi-automated “wins” in six of the 12 volume/complexity combinations, and so on. Layering on the one- and five-year analysis, we see that NLP text analytics alone only ever “wins” versus semi-automated classification or machine learning solutions at high and very high volumes of comments, and even then only with simple coding scenarios. Machine learning becomes very attractive in three-plus-year projections, while semi-automated becomes relatively less cost-efficient after the first year for most scenarios.
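A scoring exercise of this kind can be sketched in a few lines. The version below assumes the stated thresholds and rates, treats tuning as a one-time setup cost and holds volume flat across the horizon. Because the full formula behind the figures is not spelled out here, the counts it prints depend on those assumptions and need not match Figure 2. All names are hypothetical.

```python
from collections import Counter

VOLUMES = {"low": 10_000, "medium": 100_000, "high": 500_000, "very high": 1_000_000}
CODEBOOKS = {"simple": 50, "intermediate": 250, "advanced": 1_000}

# (tuning dollars per hour, hours per code, cents per response)
TECHNOLOGIES = {
    "semi-automated": (0, 0, 30),
    "machine learning": (50, 2, 15),
    "rules-based NLP": (125, 10, 10),
}


def total_cost(tech: str, codes: int, volume: int, years: int) -> float:
    """One-time tuning cost plus per-response cost over the horizon (ASSUMPTION)."""
    rate, hours, cents = TECHNOLOGIES[tech]
    return rate * hours * codes + cents * volume * years / 100


def cheapest(codes: int, volume: int, years: int) -> str:
    """Name of the lowest-cost technology for one volume/complexity cell."""
    return min(TECHNOLOGIES, key=lambda tech: total_cost(tech, codes, volume, years))


def score(years: int) -> Counter:
    """Count how many of the 12 grid cells each technology wins."""
    return Counter(
        cheapest(codes, volume, years)
        for codes in CODEBOOKS.values()
        for volume in VOLUMES.values()
    )


for years in (1, 3, 5):
    print(f"year {years}: {dict(score(years))}")
```

Even in this simplified form, rules-based NLP comes out cheapest only for simple codebooks at high and very high volumes. Swapping in an organization's own volumes, codebook sizes and rates is a matter of editing the three dictionaries.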

Of course, each organization will have a unique set of circumstances to evaluate and accommodate. The above analysis clearly shows the risk associated with locking in to a single classification technology platform, as well as the opportunity associated with a more fluid set of options. Following are three case studies to further demonstrate the nuance of the risks and opportunities.

Longer to obtain

On top of the lack of adaptability over time as costs and benefits shift, and the tricky nuances of various comment volume/complexity scenarios, what the single-classification technology platform provider probably won’t cover is the time and cost involved in initial setup and code-tuning. Without that, quality and productivity suffer, but with it, costs quickly outweigh benefits in many scenarios. Either way, your ROI takes much longer to attain or might never materialize as promised.

“Beware of claims advertising ‘the best text analytics tool’ as, in reality, the best text analytics tool will vary from organization to organization. Because these tools process your specific unstructured data, do not select a tool without first testing it with your data,” warns industry sage Bruce Temkin. (“Text analytics reshapes VoC programs,” page 13, May 2014)

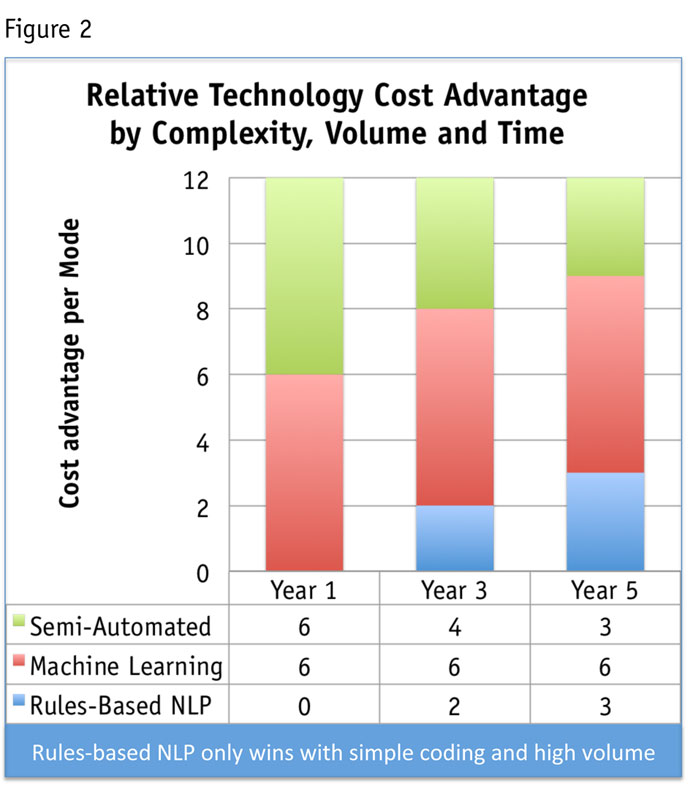

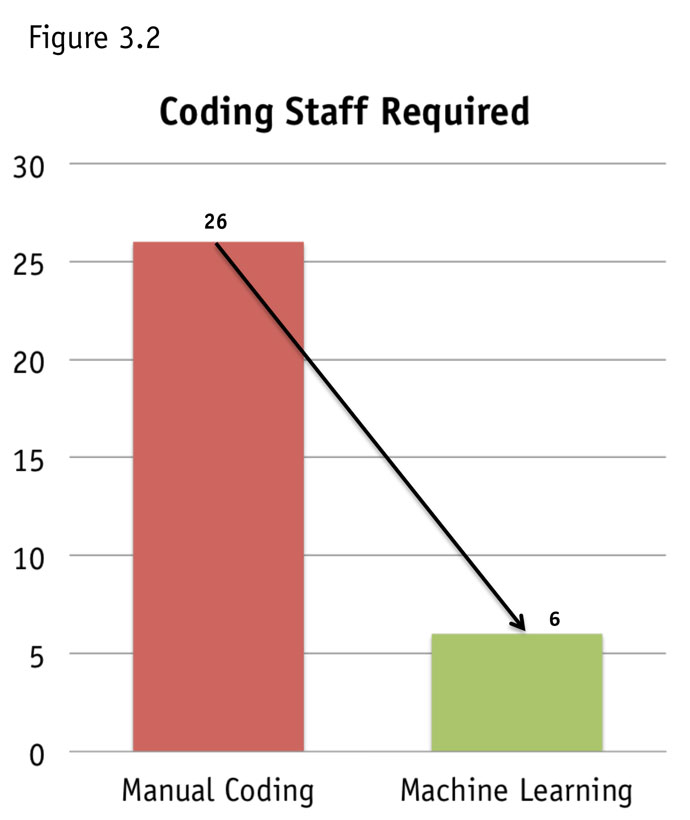

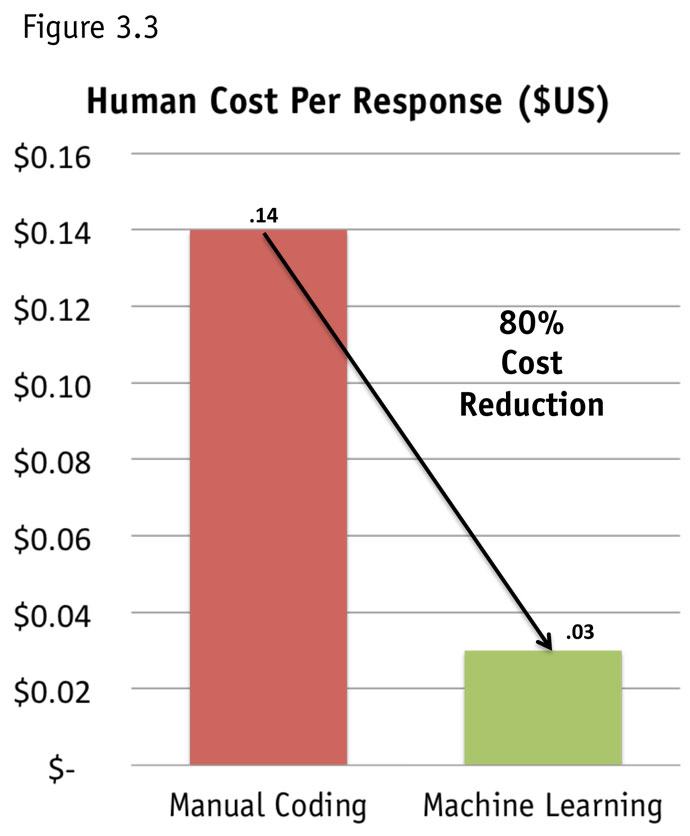

To illustrate both the issue and the opportunity of shining the light on the key hidden costs, consider an eye-opening case. A $40-billion global entertainment company with a daunting task of categorizing 3.2 million verbatim comments per year invested in a classic rules-based text analytics solution. With the promise of automation and a goal of reducing operating costs, the firm cut its staff from 26 to six based on the projected capabilities of the software. After two years, the initiative was not successful: The company was left with a reduced coding staff able to process only 700,000 responses a year, when the company needed to handle more than four times that amount, not to mention the lack of detail or precision necessary to fully pull meaning from the comments.

Scenario analysis up front and broader technology options could have saved both time and money. Hindsight clearly reveals that in this case, automated text analytics alone was not the answer. The real solution was a combination of semi-automated coding and machine learning: in essence, a facilitated manual start with an automated finish. In this example, the program imitated manual coding at the rate of tens of thousands of comments per hour through a learning metaphor – basically learning by example. Productivity improved by nearly 400 percent and human costs were reduced by 80 percent, with accuracy scores between 85 and 95 percent (see Figures 3a, b, c).

‘Complete paradigm shift’

Further illuminating the limitations of a one-dimensional platform approach versus the possibilities of a multi-dimensional approach, Safelite AutoGlass has driven “a complete paradigm shift” in the company’s decision-making with the portfolio approach as the underpinning, according to Kellan Williams, customer and quality analytics manager at Safelite.

Williams set out with a challenge to interpret the textual comments about reasons for high or low scores among the 500,000 survey responses the company collects annually in its customer satisfaction survey and to link them to other data like Net Promoter Scores. An initial attempt to use standard text analytics software proved inconsistent. Instead, the firm dramatically upgraded by starting with text analytics software and applying sentiment analysis and automated text categorization methods to these open-ended responses. This combined approach delivered results within a week of adoption, processed and analyzed entire datasets within hours rather than days or weeks and, at last, produced truly actionable NPS data (see Figure 4).

Unlike some text analytics methods, which essentially perform a new analysis each time, Williams advocates applying the same analytical framework, improving this incrementally every month. “Being able to have consistent methodology and consistent algorithms that are applying the sentiment is really what has given me the ability to have actual insights out of the data,” he says. “We are definitely making decisions based on text analytics that are improving our customer experience. We have the whole picture of our customer and we are not doing anything extra to touch that customer. It’s data we already are gathering. It’s like doing a bunch of research projects or focus groups within one dataset.”

Capitalizing on real opportunities

It is not just in resolving issues where the multi-technology approach wins; it is also in capitalizing on real opportunities. Consider a more complex scenario, with a research data taxonomy designed to capture every possible aspect of customer experience. Research firm Market Strategies had just such a challenge. With a goal of automating as much of the work as possible without sacrificing quality, Market Strategies capitalized on the opportunity to utilize all three text analytic technologies: NLP, semi-automated coding and machine learning. The result was powerful: a 95 percent reduction in labor hours, a 21-fold increase in productivity and enhanced quality control.

Combining the three analytical methods allowed Market Strategies to bring the voice of the customer into the heart of its client’s analysis of both market research and enterprise feedback management data.

Apply the right tool

In cases where the platform focuses solely on a single text analytics approach, there follows a mandate for the client to standardize on the provider’s platform, often requiring complete replacements of existing systems and processes. A good analogy to this situation is the familiar saying: “When the only tool in your belt is a hammer, every problem tends to look like a nail.” With a multi-technology approach, customers can apply the right tool, or combination of tools, to the project at hand and thus deliver an efficient and effective solution.

Expanding your options to take advantage of an integrated suite of technologies allows you to keep your ears open to the customer, rather than getting stuck in the lull of simple white noise in a set collection-and-analysis platform. Even with a state-of-the-art collection tool, the voice of the customer speaks loudest and most clearly outside of those boundaries. There is real power in this amplified ability to meet the customer where they are, rather than expecting them to conform to some other process and solution. It reveals the possibilities of both understanding and delivering the world through insightful application of compound data sets and multi-technology solutions to capture nuanced, actionable VoC and drive material ROI from effective and efficient CEM.