Fairgen's position on digital twins

Editor's note: Samuel Cohen, Ph.D., is the CEO and founder of Fairgen, an AI company building the infrastructure for simulated audience research. He can be reached at samuel@fairgen.ai.

The decision dilemma

Imagine a mid-sized consumer brand finalizing the creative for a major seasonal campaign. The media buy is locked in, but the marketing team is split between two distinct messaging concepts. One emphasizes value; the other leans heavily into lifestyle aspiration. The launch is in five days. A traditional focus group or quantitative survey would take weeks to field and analyze, and the budget for this quarter's primary research is already depleted. The result? The team makes a multi-million-dollar bet based on the loudest voice in the room.

This scenario plays out daily across industries. Modern marketing and product teams are making decisions at an unprecedented pace, yet most of those decisions lack timely customer input. Much of the waste in marketing budgets stems not from bad data but from the absence of any data at all – decisions made on instinct because the alternative was too slow or too expensive.

The traditional choice has always been binary: spend $20,000 and wait six weeks for a proper field study, or simply go with gut feeling. Most decisions inevitably default to the latter. This creates what we might call a "validation gap" in the research stack. High-stakes decisions rightly receive the investment of traditional research. Micro-stakes decisions can rely on experience. But medium-stakes decisions – like optimizing ad messaging, testing a positioning concept or exploring pricing scenarios – are too important to hand to raw AI, yet move too fast for traditional research. This middle ground is where market leaders are made or broken.

Beyond synthetic: Defining the digital twin approach

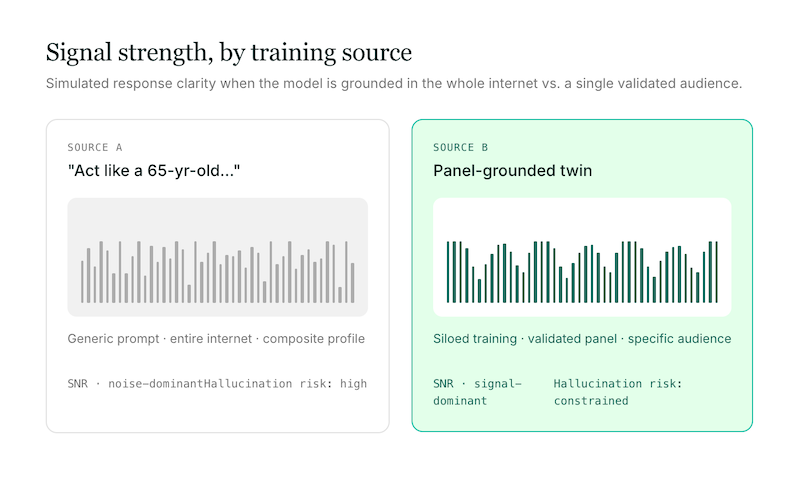

The term "synthetic data" has generated considerable heat in the consumer insights world, and not always for the right reasons. It is important to distinguish the digital twin approach from generic synthetic data generation and from simply using ChatGPT for research. While simple forms of synthetic data have been used for years to test statistical models, generating sophisticated data that varies across dimensions in human-like ways requires more than just prompting an LLM to "act like a 65-year-old female living in the South." Real consumers who fit that description vary enormously in almost any attitude or behavior. The signal from a generic prompt is weak because it draws on the entire internet rather than on a specific, validated audience.

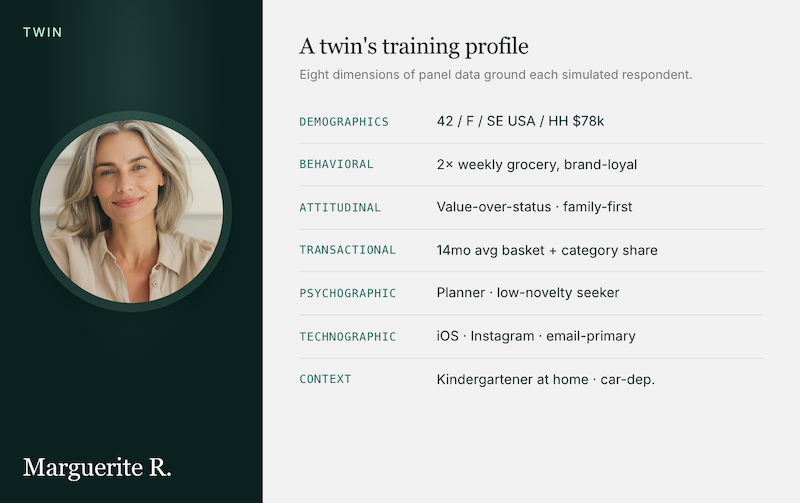

A true digital twin takes a fundamentally different approach. It is a one-to-one simulation of an individual respondent, grounded in multidimensional panel data so that the simulation reflects a specific, real-world audience rather than a broad composite. These digital twins are constructed from comprehensive data profiles spanning demographics, behavioral patterns, transactional data, attitudinal sentiment, firmographics for B2B applications, psychographic mind-set data, technographic usage patterns, and contextual information such as geography and life stage.

By grounding the simulation in specific, real-world panel data for a given audience and category, the resulting insights offer a distinct signal. The digital twin is trained on your specific audience, not the whole internet. Researchers can either access pre-built audiences from a marketplace of leading research data providers or build a proprietary audience by uploading their own past studies to train siloed, company-specific twins.
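To make the profile dimensions described above concrete, here is a minimal Python sketch of how one twin's grounding data might be organized. It is an illustration only: the `TwinProfile` class, its field names and the `missing_dimensions` check are hypothetical and do not describe any vendor's actual schema.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass(frozen=True)
class TwinProfile:
    """Hypothetical record of the panel data behind one digital twin."""
    respondent_id: str                    # pseudonymous ID of the source panelist
    demographics: dict[str, str]          # e.g. age band, region
    behaviors: dict[str, str]             # behavioral patterns
    transactions: list[dict[str, str]]    # transactional data
    attitudes: dict[str, int]             # attitudinal sentiment, e.g. 1-5 ratings
    psychographics: dict[str, str]        # mind-set data
    technographics: dict[str, str]        # technology usage patterns
    context: dict[str, str]               # geography and life stage
    firmographics: Optional[dict[str, str]] = None  # B2B applications only


def missing_dimensions(profile: TwinProfile, b2b: bool = False) -> list[str]:
    """List the profile dimensions that are empty, as a simple grounding check."""
    skipped = {"respondent_id"} if b2b else {"respondent_id", "firmographics"}
    return [
        f.name
        for f in fields(profile)
        if f.name not in skipped and not getattr(profile, f.name)
    ]


# Made-up example values, for illustration only.
example = TwinProfile(
    respondent_id="panelist-001",
    demographics={"age_band": "65+", "region": "South"},
    behaviors={"shopping_channel": "in-store"},
    transactions=[],
    attitudes={"price_sensitivity": 4},
    psychographics={"outlook": "pragmatic"},
    technographics={"primary_device": "tablet"},
    context={"life_stage": "retired"},
)
print(missing_dimensions(example))
```

A check like this reflects the idea that a twin is only as specific as the data behind it: a profile with several empty dimensions is closer to the generic prompt than to a one-to-one simulation.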

Where simulation excels in practice

Digital twins can function as a rapid feedback mechanism, providing directional insights in minutes rather than weeks. This capability unlocks several specific use cases that typically fall into the validation gap.

In ad and messaging optimization, teams can quickly test ad variations to maximize engagement and reduce customer acquisition costs before committing media spend. For concept testing, researchers can gather actionable feedback from target users to validate concepts early, ensuring alignment with market needs and minimizing development risk without the lead time of a traditional focus group. In pricing and packaging, product teams can experiment with various strategies and combinations to pinpoint optimal price points before a market launch.

Across all these scenarios, the methodology allows for both quantitative scale – deploying structured surveys to hundreds of digital twins to gauge broad sentiment – and qualitative depth, engaging in one-to-one chats with individual twins to probe the "why" behind their answers. This dual capability is what distinguishes the approach from both traditional surveys, which offer scale but limited depth, and traditional qualitative methods, which offer depth but limited scale.
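As a rough illustration of the quantitative side, the sketch below aggregates forced-choice answers from a batch of twins and reports a preference only when a Wilson confidence interval for the share excludes 50%. The function names and the vote counts are assumptions made for the example, not output from any real platform. The interval captures sampling noise among the twins only; it says nothing about how closely the twins match real respondents.

```python
import math
from statistics import NormalDist


def wilson_interval(successes: int, n: int, confidence: float = 0.95) -> tuple[float, float]:
    """Wilson score interval for a proportion of `successes` out of `n`."""
    if n <= 0:
        raise ValueError("n must be positive")
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    p = successes / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half_width, centre + half_width


def directional_read(votes: list[str], confidence: float = 0.95) -> str:
    """Return the preferred concept, or 'no clear signal' if the interval spans 50%."""
    value_votes = sum(1 for v in votes if v == "value")
    low, high = wilson_interval(value_votes, len(votes), confidence)
    if low > 0.5:
        return "value"
    if high < 0.5:
        return "lifestyle"
    return "no clear signal"


# Made-up answers from 200 hypothetical twins, for illustration only.
votes = ["value"] * 130 + ["lifestyle"] * 70
print(directional_read(votes))
```

When the read comes back as "no clear signal," the one-to-one chats described above are a natural next step for probing why the audience is split.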

Honesty in application: Addressing the skepticism

The insights industry is understandably skeptical of tools that claim to replace human respondents, and that skepticism is healthy. The most important distinction insights professionals must make is that digital twins are not a replacement for real field research. They are best understood as a powerful complement to traditional methods – a "second-best to fieldwork" option that provides a strong directional signal when primary research is not feasible.

Digital twins provide directional insights, not definitive conclusions. In a conservative industry full of vendors who overclaim, that honesty is not a weakness – it is a differentiator. Furthermore, robust digital twin platforms mitigate the risks of data bias and "hallucination" by strictly siloing the training data. Unlike open-ended LLMs that pull from the entire internet, purpose-built digital twins are constrained by the specific, validated panel data they are trained on. When a twin responds to a concept test, its reaction is shaped by the real profile of the respondent it was modeled on – not by the statistical average of the internet.

Researchers should approach digital twins with the same rigor they apply to any methodology. Simulations are best used before and after field research: beforehand to narrow the options worth fielding, and afterward to extend what a study has already established. Used this way, they keep the customer at the center of everyday decisions while reserving rigorous fieldwork for the highest-stakes questions.

Modernizing the research stack

By integrating digital twins into their toolkits, insights teams can remove much of the time and cost friction that keeps customer input out of everyday decisions. They can deliver timely, directional insights that align with the pace of modern business operations, without compromising the integrity of their research practice.

Digital twins do not replace the expertise of the human researcher; rather, they can help researchers become faster, more agile partners to marketing and product teams. Imagine a future where the research team is no longer a bottleneck but a proactive strategic partner, able to provide immediate, data-backed guidance on Tuesday's ad copy debate without derailing the budget for next quarter's foundational segmentation study.

By closing the validation gap, we can finally move away from the binary choice of expensive studies or gut feeling. The voice of the customer deserves to be present in every decision that matters – not just the ones we can afford to study the traditional way.