Editor's note: Manila Austin is vice president, research, at Boston research company Communispace. She can be reached at 617-316-4118 or at maustin@communispace.com. This article appeared in the January 9, 2012, edition of Quirk's e-newsletter.

An often-cited concern about doing research via online communities, fan pages or Twitter is that ongoing, long-term interaction between consumers and brands heightens brand awareness and affinity, producing a sample that is informed and engaged rather than pristine and typical. As market researchers, we worry that data may be biased - that somehow, through the peculiarities or limitations of our methods or through lack of care, we introduce systematic error into research results. And certainly, online communities and other collaborative, social settings are inherently uncontrolled environments in which to conduct consumer research. By design, they let the consumer lead the way and provide an involving, interactive experience. But must this necessarily lead to bias?

I would like to explore several facets of this question: How severe is the bias issue, really? What kinds of bias are the most troublesome? And what are market researchers to do about it?

I begin by presenting research that sheds light on the relationship between engagement and bias and then conclude with some advice for practitioners about the kinds of trade-offs we all need to be thinking about as we forge ahead in making research engaging and actionable.

Online communities as a research setting

Of particular concern are the demand characteristics of online communities as a research setting. Because they are intentionally uncontrolled environments in many regards, it is tempting to focus on how readily bias can be introduced into a study. People may behave differently because they know they are being studied (the Hawthorne effect); interact with and be influenced by community facilitators (the Rosenthal effect); and become sensitized to the research over time, improving their performance in any number of ways (the practice effect).

These are all reasonable red flags, but they are also the cost of doing business when you want highly engaged, committed, motivated and honest participation in your research. And that is an important trade-off to understand. By design, online communities accept fewer research controls in exchange for deeper and ongoing engagement. When research participants are engaged, the quality of their involvement goes up, as does the quality of their output: They work harder, they share more and they stay engaged in the research for longer.

So the question we should be asking ourselves is not whether data from online communities is biased but rather: In what ways might the data be biased, how important is that to a given research question and do the potential rewards outweigh the risks?

Two truths

Over the years, we have conducted a number of research-on-research studies to explore the drivers and effects of engagement in private, online communities. In very broad strokes, our data suggest two truths, which are seemingly at odds: 1) engagement in online communities builds brand-consumer (or company-customer) relationships and 2) community members remain candid and critical despite their relationship with the brand.

Intuitively, it makes sense that community members would come to trust and care about the sponsoring brand as their experience in the community grows. They engage in weekly brand-related discussions, upload pictures and videos of themselves and their families and often serve as the eyes and ears of a brand, scoping out the marketplace and reporting back.

Explored the relationship

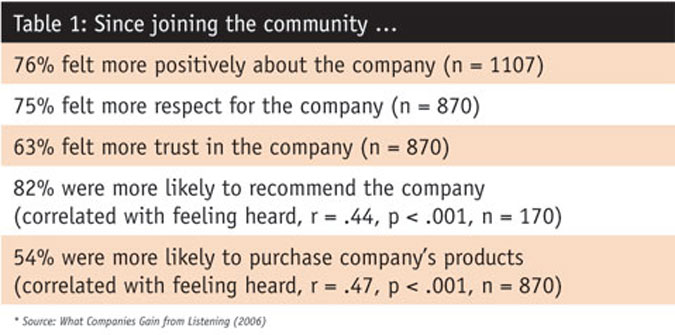

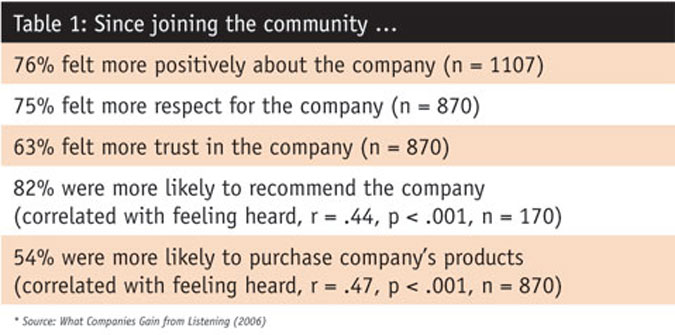

Communispace formally explored the relationship between engaging in online communities and members' feelings toward a brand in a 2006 study, What Companies Gain from Listening, which included over 2,000 members from 20 different communities. As we would expect, this kind of ongoing involvement with a brand does build relationship. In particular, we found that the vast majority of members surveyed felt participating in their online community had positively changed how they felt about the company that sponsored it - more positive regard, greater trust and increased respect. Community members also said they were more likely to recommend the sponsoring company's products or buy them themselves, as a result of belonging (see Table 1).

But what aspects of community membership drive these self-reported increases in positive feelings? We conducted several correlation analyses to answer this question and found that "feeling heard" was positively and significantly related to intent to purchase and likelihood to recommend.
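As a minimal sketch of what a correlation analysis of this kind involves, the Python snippet below computes a Pearson correlation coefficient between two sets of survey ratings. The ratings, the variable names and the choice of Pearson's r are assumptions made for illustration; they are not data or methods taken from the study, and the snippet does not test for statistical significance.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of cross-products and sums of squared deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical 5-point ratings from eight members (not data from the study).
feeling_heard = [5, 4, 4, 3, 5, 2, 3, 4]
intent_to_purchase = [5, 4, 3, 3, 4, 2, 2, 4]

r = pearson_r(feeling_heard, intent_to_purchase)
print(f"r = {r:.2f}")
```

A value of r near +1 would indicate that members who feel more heard also tend to report higher purchase intent; a value near 0 would indicate no linear relationship.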

Given these results, which were in line with our hypotheses at the time and still hold true today, it is only natural to assume that community members' opinion of a brand's products and services would become more positive over time. A logical conclusion, perhaps, yet not necessarily a true one. Our research provides evidence to suggest the opposite, in fact.

A second look

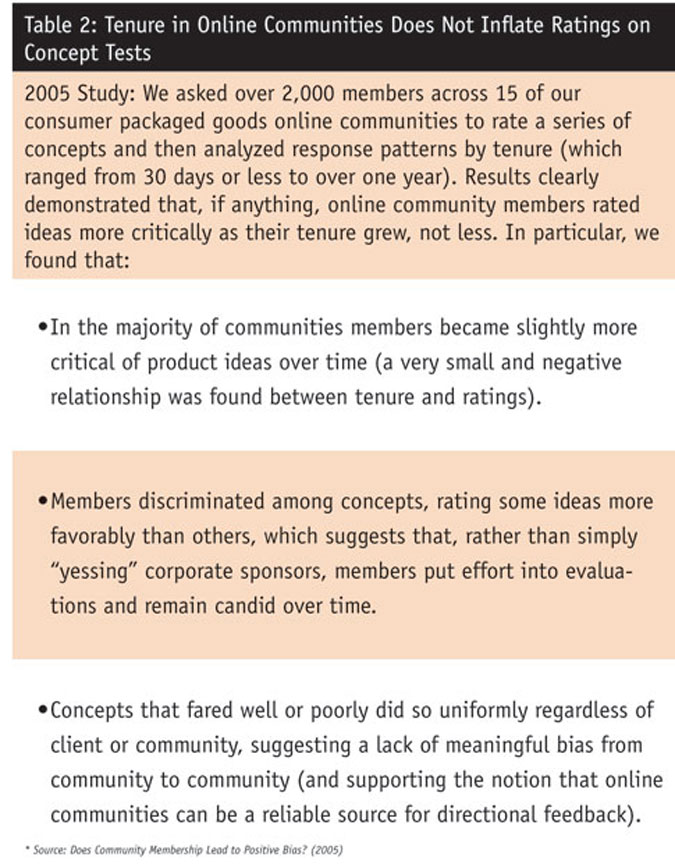

We recently updated one of our 2005 white papers, Does Community Membership Lead to Positive Bias? In it, we explored the hypothesized relationship between long-term, ongoing engagement in our online communities and inflated ratings on concept tests. The concern we were hearing at the time was that community members would become brand fans as a result of interacting with each other and with sponsoring companies over time and that, as brand fans, their feedback would be overly positive, systematically skewed and untrustworthy.

In the intervening years we found no reason to doubt the veracity of the findings reported in the original study. Yet, in the fast-moving world of social media, 2005 seems like eons ago. So we took a second look.

Building off our 2005 methodology, we analyzed over 100 "standard" concept test survey questions (e.g., intent to purchase, appeal and believability), grouping responses by member tenure. We tested for significant differences across tenure groups and found none in over 99 percent of the cases. For the one significant difference we did find, the longest-tenured community members were also the most critical. In other words, the results of our 2011 analysis confirmed and supported what we learned in the earlier study: If anything, community members with the shortest tenure were more likely to rate concepts positively than were members with the longest tenure.
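One common way to test a single survey question for differences across tenure groups is a chi-square test of independence on the response counts. The sketch below illustrates that idea only: the counts, the three tenure bands and the choice of a chi-square test are assumptions, since the white paper's actual test and data are not described here.

```python
import math

def chi_square_stat(table):
    """Pearson chi-square statistic for a table of observed counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed_count in enumerate(row):
            # Count expected in this cell if tenure and response were unrelated.
            expected = row_totals[i] * col_totals[j] / total
            stat += (observed_count - expected) ** 2 / expected
    return stat

# Hypothetical counts for one question (not data from the study):
# rows = tenure groups (short, medium, long),
# columns = (would buy, would not buy).
observed = [
    [62, 38],
    [58, 42],
    [55, 45],
]

stat = chi_square_stat(observed)
# A 3 x 2 table has (3 - 1) * (2 - 1) = 2 degrees of freedom, and for
# exactly 2 degrees of freedom the chi-square p-value is exp(-stat / 2).
p_value = math.exp(-stat / 2)
print(f"chi-square = {stat:.2f}, p = {p_value:.3f}")
print("significant at 0.05" if p_value < 0.05 else "no significant difference")
```

When a test like this is repeated across roughly 100 questions at a 0.05 threshold, a handful of differences would be expected to cross the threshold by chance alone, which is worth keeping in mind when interpreting an isolated significant result.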

The rich, human experience

Quantitative ratings on product ideas and other concepts represent only a fraction of what can be done using online communities. The great value of opt-in, open-ended, ongoing formats for research is the opportunity to understand the rich, human experience that underlies the numbers; the "so what?" that sparks insight and drives innovation. Central to the value proposition of online communities, then, is the willingness and commitment of members to be candid and provide descriptive details about their lives.

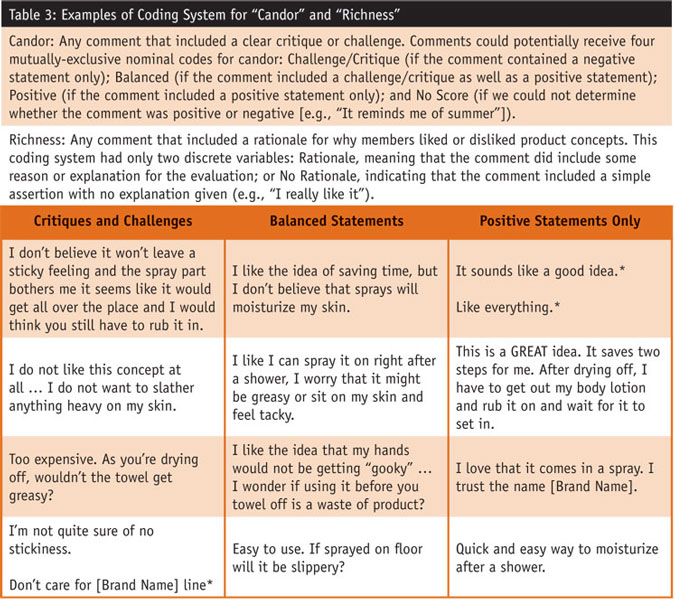

For this reason, it is important to think about bias broadly and consider how online community members may or may not skew what they say in open-ended formats. We did just that in our 2005 paper, examining the possibility that, as with quantitative ratings on concept tests, community members might become less critical in their text-based evaluations. In particular, we created two coding systems to assess candor (i.e., how critical or positive were responses) and richness (i.e., the extent to which members described their rationale in their responses). Table 2 describes our system (for which we established 100 percent inter-rater reliability) and provides a few examples where members were describing their assessment of a skin care product idea.
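Inter-rater reliability for a coding system like this is often summarized as simple percent agreement or as Cohen's kappa, which corrects for agreement expected by chance. The following sketch shows both under stated assumptions: the code labels and the two coders' assignments are hypothetical, and the article does not say which statistic was used to establish its 100 percent figure.

```python
from collections import Counter

def percent_agreement(coder_a, coder_b):
    """Share of responses to which both coders assigned the same code."""
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)

def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa: agreement between two coders, corrected for chance."""
    n = len(coder_a)
    p_observed = percent_agreement(coder_a, coder_b)
    counts_a = Counter(coder_a)
    counts_b = Counter(coder_b)
    # Chance agreement: product of each coder's marginal rate, per label.
    p_expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (p_observed - p_expected) / (1 - p_expected)

# Hypothetical candor codes for six open-ended responses (illustration only).
coder_a = ["critical", "positive", "neutral", "critical", "positive", "critical"]
coder_b = ["critical", "positive", "neutral", "critical", "positive", "critical"]
coder_c = ["critical", "positive", "neutral", "critical", "positive", "positive"]

print(percent_agreement(coder_a, coder_b), cohens_kappa(coder_a, coder_b))
print(percent_agreement(coder_a, coder_c), cohens_kappa(coder_a, coder_c))
```

Perfect agreement gives a value of 1.0 on both measures; a single disagreement, as between the first and third hypothetical coders, lowers kappa more than it lowers raw agreement because kappa discounts matches that could occur by chance.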

In this example, when we compared open-ended contributions from community members whose tenure was less than four months to those from members who had belonged for over one year, we found no significant differences between the two groups. The percentages of candid and rich statements were virtually the same for both the shorter-tenured and longer-tenured members, demonstrating that the quality of online community members' feedback remained stable - qualitatively and quantitatively - over time (Table 3).
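A comparison of this kind, the share of statements coded as candid in one tenure group versus another, can be checked with a two-proportion z-test. The sketch below is an illustration under assumptions: the counts are hypothetical, and the article does not specify which test was applied to the coded statements.

```python
import math

def two_proportion_z_test(hits_a, n_a, hits_b, n_b):
    """Two-sided z-test for a difference between two independent proportions."""
    p_a = hits_a / n_a
    p_b = hits_b / n_b
    # Pooled proportion under the null hypothesis of no difference.
    pooled = (hits_a + hits_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical counts of statements coded "candid" (not data from the study):
# shorter-tenured members: 120 of 200; longer-tenured members: 118 of 200.
z, p_value = two_proportion_z_test(120, 200, 118, 200)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A large p-value here would be consistent with the two tenure groups being equally candid; it does not by itself prove that no difference exists.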

On one hand, we can see how community members' feelings toward a sponsoring company become more positive as a result of their engagement. Yet on the other hand, and as our 2005 and 2011 research shows, long-term engagement in online communities does not appear to make members less candid or critical; if anything it appears to have the opposite effect.

Understanding the trade-offs

So the answer to the initial question of which approach - transparent, long-term and personal or anonymous, one-time and impersonal - is most trustworthy really comes down to understanding the trade-offs associated with each. Even in the most carefully designed research studies it is impossible to construct perfect controls, making some bias realistically unavoidable. In social media-based research this is even truer. Rather than bemoan this situation, researchers would be better served by thinking through which forms of bias are actually acceptable given the research question and business priorities.

In Communispace's 2010 paper, Leaving Our Comfort Zone: 21st Century Market Research, we identify a number of trade-offs market researchers should acknowledge and understand. Here are two that are particularly relevant for the question of positive bias:

- Trade anonymity for transparency because transparency builds engagement. There is no doubt that when companies disclose their identity participants feel differently about the brand and the research process. When companies invite people into the fold, and when they demonstrate that they are truly listening, people respond in kind. But we must acknowledge, as well, that engagement is critical for quality. When people are engaged they try harder, they do and share more and go to great lengths for companies when they know who they are talking to.

- Trade distance for relationship because relationship creates candor. Similarly, we need to recognize that building a relationship with research participants can actually enhance data quality in many ways. When people know who you are and why you are asking them questions, and when they come to know you and trust you over a period of time, they become increasingly committed to being candid. We have seen that community members form reciprocal relationships with company sponsors. A mutual obligation develops, which compels research participants to be ever more truthful and companies to listen and respond candidly in return.

Candid and honest

Our research shows that community members remain candid and honest over time, despite many months of ongoing participation in which they form relationships with one another and the sponsoring company. And rather than being undermined by this dynamic, we see our clients benefiting from it. Highly engaged, long-term dialogue with a brand doesn't create risk; it mitigates it by enabling an unparalleled level of specificity and candor in member feedback.

References

Katrina Lerman and Manila Austin (2006), What Companies Gain from Listening (Communispace white paper: www.communispace.com).

Manila Austin (2011), Does Community Membership Lead to Positive Bias? (Communispace white paper: www.communispace.com).

Manila Austin and Julie Wittes Schlack (2010), Leaving Our Comfort Zone: 21st Century Market Research (Communispace white paper: www.communispace.com).