Rethinking MaxDiff

Editor’s note: Morris Wilburn is president of Advanced Customer Analytics, in Lawrenceville, Ga. Wilburn has a master’s degree in sociology with a specialization in survey research methodology and additional master’s-level training in advanced statistical analysis from the University of Chicago. He has over 30 years of experience in quantitative marketing research.

In MaxDiff, respondents are shown a subset of items, such as product concepts or customer service attributes, and asked to identify the most and the least preferred. These questions yield the data that the MaxDiff software analyzes. To make the task easier for respondents, some data collection programs ask the respondent to rank the items before making these decisions.

It is my understanding that none of the programs in use records those rankings for analysis. A valuable opportunity is missed here, because a statistical model designed to analyze ranking data already exists: the rank-ordered logit model.
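For readers unfamiliar with the rank-ordered logit model, its core idea can be sketched in a few lines of Python. In its standard form, the model treats a ranking as a sequence of choices: the top item is chosen from the full set, the second from what remains, and so on. The function name and the utility values below are hypothetical and chosen only for illustration; they do not come from any study.

```python
import math
from itertools import permutations

def ranking_probability(ranking, utility):
    """Probability of a ranking (best first) under a rank-ordered logit model."""
    remaining = list(ranking)
    prob = 1.0
    # The last remaining item is "chosen" with certainty, so it is skipped.
    for item in ranking[:-1]:
        denom = sum(math.exp(utility[j]) for j in remaining)
        prob *= math.exp(utility[item]) / denom
        remaining.remove(item)
    return prob

# Hypothetical utilities for four concepts (illustrative values only).
utility = {"A": 0.8, "B": 0.3, "C": 0.0, "D": -0.5}

p_abcd = ranking_probability(["A", "B", "C", "D"], utility)

# Sanity check: the probabilities of all 24 possible rankings sum to one.
total = sum(ranking_probability(list(p), utility) for p in permutations(utility))
```

Estimating the model means finding the utilities that make the observed rankings most probable; the sketch only shows how a set of utilities translates into the probability of one ranking.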

Advantages of collecting and analyzing rankings

One advantage of collecting and analyzing rankings is efficiency in the data collection process.

To illustrate, if a respondent is asked only four questions and ranks four product concepts in each, that yields 16 observations (lines of data) in the data file for analysis. Multiply 16 by the number of respondents to get the size of the full data file. MaxDiff produces fewer than half as many observations per question.
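The count of 16 can be seen by laying the rankings out as one line of data per ranked concept. The sketch below is only an illustration: the concept letters, the rankings and the field names are hypothetical, and an actual data file may be laid out differently.

```python
# Hypothetical rankings from one respondent: four questions, four concepts
# each, listed from most to least preferred.
rankings = {
    1: ["A", "C", "B", "D"],
    2: ["E", "A", "G", "F"],
    3: ["H", "B", "E", "C"],
    4: ["D", "G", "H", "F"],
}

# One row per (question, concept) pair, with the rank the concept received.
rows = [
    {"respondent": 1, "question": q, "concept": concept, "rank": rank}
    for q, ranked in rankings.items()
    for rank, concept in enumerate(ranked, start=1)
]

n_ranking_rows = len(rows)  # 4 questions x 4 ranked concepts
```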

Because each question collects more information, fewer questions are needed, which is an opportunity to reduce respondent fatigue. Another aspect of the fatigue issue is that evaluating and ranking four product concepts may be easier than evaluating five (or more) and identifying the most preferred and least preferred.

Another advantage of ranking is greater precision in measurement by the respondent. In reality, customer preference is not binary in form (do or do not want) – it is a matter of degree. By asking the respondent to rank the items, we address degree to some extent.

This has been discussed on internet forums for more than a decade, but ranking data has seldom been collected and analyzed. I believe that one of the main reasons is that software capable of fitting the rank-ordered logit model is not easily accessed. Also, in every case of which I am aware, the software is difficult to use, with poor technical support being a common problem.

Fortunately, a work-around has been developed. It is an intricate set of binary logit models.

We recently used this work-around on a product concept study. There were seven concepts. The client had an existing product and was trying to enter the market under study, so that product was included as an eighth concept. It was unbranded and neutrally described. Including it had several benefits; for example, it established a known and relevant point of comparison for the client in evaluating the results of the analysis.

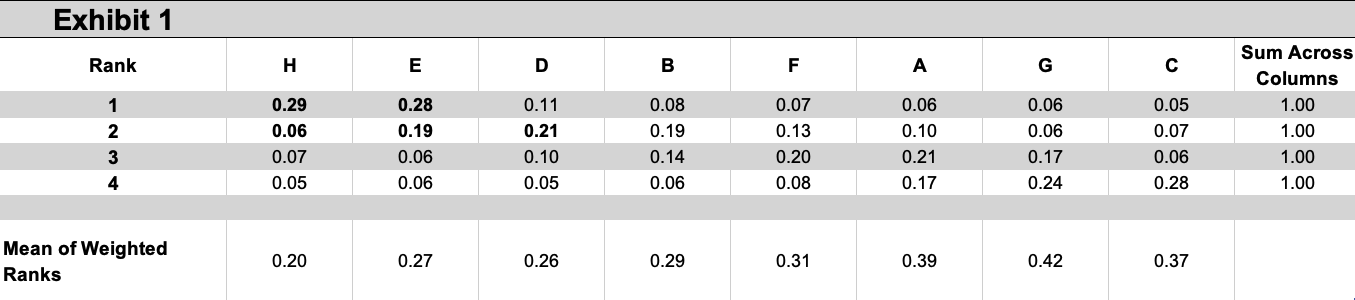

To construct this part of the questionnaire, we used experimental design methods to create an incomplete block design, with each block containing a combination of four items (product concepts). Each block was used as a question in the questionnaire. Each respondent was exposed to two of these blocks, independently of each other, and asked to rank the four product concepts in the respective block in terms of likelihood of trying. The results of the analysis are shown in Exhibit 1.
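The specific design used in the study is not reproduced here. As a rough illustration of what an incomplete block design for eight concepts in blocks of four can look like, the Python sketch below builds one textbook balanced design. The construction (keeping the four-item subsets whose indices XOR to zero) and the concept letters are assumptions made for this example, not a description of the study's design.

```python
from collections import Counter
from itertools import combinations

concepts = "ABCDEFGH"  # eight concepts, indexed 0 to 7

# Keep every four-item subset whose indices XOR to zero. For eight items
# this gives a balanced incomplete block design.
blocks = [
    combo for combo in combinations(range(8), 4)
    if combo[0] ^ combo[1] ^ combo[2] ^ combo[3] == 0
]

# Balance checks: how often each concept and each pair of concepts appears.
item_counts = Counter(i for block in blocks for i in block)
pair_counts = Counter(
    pair for block in blocks for pair in combinations(block, 2)
)

for block in blocks:
    print(" ".join(concepts[i] for i in block))
```

In this design every concept appears in the same number of blocks and every pair of concepts appears together equally often, which is the kind of balance experimental design methods aim for.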

The first row contains the probability of each concept being ranked first. The second row contains the probability of each concept being ranked second. And so on.

In looking down a given column, we can see the probability of the respective concept being ranked first, second, third and fourth.

Looking only at the first row, it is clear that if the client had to select only one concept (which is not necessarily the case), it would be either concept “H” or “E.” Their values are distinctly higher than those of the other concepts. But which one? Their values are very close to each other.

We can gain insight into this question by examining the second row. Concept “E” has a probability of .19 of being ranked second. (By random chance, it would have a probability of 1/8 = .125.) This is not a coincidence: In the data, respondents who ranked concept “H” as first were more likely to rank concept “E” or “D” as second. This is why the probability of concept “H” being ranked second is so low.

Depending on the goals of the study, we may need to summarize these 32 probability values. One way to do so is to develop a single measure of the overall attractiveness of each concept. In this study, we calculated the mean of the weighted ranks for each concept. Using concept “H” to illustrate, this is the mean of four values: 1 x .29, 2 x .06, 3 x .07 and 4 x .05. (Values with eight decimal places were used to perform these calculations.)
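The calculation for concept “H” can be reproduced with a short Python sketch. It uses the rounded two-decimal probabilities quoted in the text, so the result (about 0.205) may differ slightly from the figure obtained with eight decimal places. The variable names are illustrative.

```python
# Rounded probabilities for concept "H" as quoted in the text (ranks 1 to 4).
rank_probs_h = {1: 0.29, 2: 0.06, 3: 0.07, 4: 0.05}

# Weight each probability by its rank, then average the four weighted values.
weighted = [rank * p for rank, p in rank_probs_h.items()]
mean_weighted_rank_h = sum(weighted) / len(weighted)

print(round(mean_weighted_rank_h, 3))
```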

In reviewing the mean of the weighted ranks, remember that a low value is “good.”

Depending upon the goals of the study, it would be unwise to disregard Exhibit 1: the product concept having the best (lowest) mean rank is not necessarily the product concept that is ranked “first” most often.

Much more could be said here. For example, this could be the basis of a simulation program.

Perhaps the main advantage of this approach is that it lays the foundation for an analysis of why respondents ranked the concepts the way they did. The analysis generates probability values for each concept at the respondent level. Respondent-level information, such as membership in a market segment, can be included in the model, so the probability values the model generates reflect that membership and all the information it contains. This would make the generated probability values a very rich dependent variable in a driver analysis.

Little of what has been written here is new. In 2013, in several forums, Joel Cadwell made many of these same arguments for the rank-ordered logit model. The obstacle to moving forward has been the lack of accessible software to perform the analysis. This modeling work-around probably has limitations that we are not yet aware of, but it seems to have much potential.