Editor's note: Doug Berdie is president of Consumer Review Systems, a Minneapolis research firm.

Long ago, a client asked me, “What’s the use of paying money to conduct research if no one believes the results and the report sits on a shelf collecting dust rather than stimulating action?” More than 40 years as a marketing research vendor have convinced me that failing to acknowledge the importance of this question, and to address the underlying concern, still leads to the underuse of marketing research data.

Three of the most useful ways to increase the credibility of survey-generated marketing research data are summarized below. They make sense and are easy to implement. In addition to increasing credibility and, hence, the likelihood that something will be done with the data, these tips also improve data reliability and add depth and insight to the findings.

Tip #1: Consider using the combination “sample and census” method.

Research credibility often suffers when part of the population is not surveyed and someone complains that, because not everyone had a chance to express an opinion, the results must not be accurate. This lament, which arises from distrust of any sampling procedure (no matter how well it is designed and implemented), diminishes the credibility of almost all types of research where sampling is used. Employees who did not receive an invitation to an employee survey often make this complaint, as do sales personnel and branch offices, who lament, “You didn’t survey my customers. How do I know the results apply to them?”

To address this concern, in research situations where this challenge to credibility can be anticipated, consider using the “sample and census” method of surveying. This method consists of the following process:

- Define the population (and relevant subsets) about which conclusions must be drawn and select an appropriate random sample, marking it as the randomly selected sample.

- Make the survey available to everyone in the population (those in the randomly selected sample and those who are not in that sample).

- During the research data collection, devote major effort to obtaining a very high response rate from the randomly selected sample and do not use valuable resources trying to stimulate response from the other people who were offered an opportunity to participate in the survey.

- Use the collected data from the randomly selected sample for quantitative data analysis and as a basis for conclusions of a quantitative nature.

- Compare the summarized, quantitative results from the randomly selected sample to other survey responses to see if they vary and by how much. (You will find that they rarely do vary by much – and that is comforting.)

- In the final report, use the verbatim responses from both the randomly selected sample and the other respondents to provide insights and quotations that support the quantitative findings.
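The bookkeeping behind these steps can be sketched in a few lines of Python. This is only a rough illustration, not a prescribed implementation: the function names, the `id` and `satisfaction` fields and the fixed random seed are all assumptions made for the example.

```python
import random
from statistics import mean


def mark_random_sample(population_ids, sample_size, seed=0):
    """Return the set of IDs marked as the randomly selected sample."""
    rng = random.Random(seed)  # fixed seed (an assumption) keeps the draw repeatable
    return set(rng.sample(sorted(population_ids), sample_size))


def split_responses(responses, sample_ids):
    """Separate completed surveys into random-sample and census-only groups."""
    in_sample = [r for r in responses if r["id"] in sample_ids]
    census_only = [r for r in responses if r["id"] not in sample_ids]
    return in_sample, census_only


def compare_groups(in_sample, census_only, field="satisfaction"):
    """Compare the mean score of the two groups on one survey question."""
    sample_mean = mean(r[field] for r in in_sample)
    census_mean = mean(r[field] for r in census_only)
    return {
        "sample_mean": sample_mean,
        "census_mean": census_mean,
        "difference": census_mean - sample_mean,
    }
```

Quantitative conclusions would be drawn from the `in_sample` group only; the comparison is a check on how far the other responses diverge, and verbatim comments from both groups remain available for the report.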

Here is an example of the sample-and-census method. A retail company with 6,000 outlets spread among nine regions wishes to assess customer satisfaction. Its key strategic focus is at the regional (not outlet) level. The “sample” part of the sample-and-census method consists of targeting 350 completed surveys per region (total = 3,150). To obtain this number of completed surveys, the company decides to phone 530 customers per region, randomly distributed among the regional branches. The company has opted to use an extensive phone follow-up procedure along with an option to complete the survey online and, based on its previous experience, is confident this approach will yield an approximate 60 percent response rate and about 350 completed surveys per region. The “census” part of the method consists of placing placards at each of the 6,000 outlets inviting customers to take the survey online.
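The planning arithmetic in this example can be checked with a short Python sketch. The function names are assumptions made for illustration. Note that a response rate of exactly 60 percent would call for roughly 584 phone contacts per region to reach 350 completes, while 350 completes from 530 contacts implies a rate closer to 66 percent, so the figures in the example are best read as approximate.

```python
import math


def invitations_needed(target_completes, response_rate):
    """Contacts required to reach a target number of completed surveys."""
    return math.ceil(target_completes / response_rate)


def implied_response_rate(target_completes, invitations):
    """Response rate implied by a target and a number of contacts."""
    return target_completes / invitations


regions = 9
target_per_region = 350

total_target = regions * target_per_region                     # 3,150 completes overall
contacts_at_60_pct = invitations_needed(target_per_region, 0.60)
rate_for_530_calls = implied_response_rate(target_per_region, 530)

print(total_target, contacts_at_60_pct, round(rate_for_530_calls, 2))
```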

This approach minimizes the cost of obtaining statistically reliable data because it limits the expensive phone surveying and follow-up to the minimum sample needed for the desired regional statistical reliability, while using the much less expensive online survey to give all customers an opportunity to provide feedback. It also heads off objections from outlets that might otherwise say, “You didn’t survey my customers so I’m not going to act on the survey recommendations.” In addition, combining the verbatim responses from both the sample and the census groups yields insightful comments (many obtained from the census responses) that can be forwarded to individual branches to help them improve the service they provide, further heightening the credibility of the survey results.

Tip #2: Use the “core and idiosyncratic” approach to questionnaire design.

Many questionnaires are designed around the notion that everyone who receives the survey should be asked the same questions. Even when surveys use skip patterns and other question-funneling tactics, the general idea is that there will be one questionnaire. And, given the need to keep questionnaires to a manageable length, this typical approach means that not every decision maker’s unique needs will be addressed by the survey. Credibility suffers when this traditional questionnaire design logic is used because, instead of the “You didn’t survey the people I deal with” complaint, a related complaint is expressed: “You didn’t obtain information about the issues that really affect me.”

The way to sidestep this credibility barrier is to use the “core and idiosyncratic” approach to questionnaire design. The “core” questions are those that are of interest to every decision maker who will be asked to act based on the survey results and the “idiosyncratic” questions are those that are of major interest to some decision makers and not to others.

Using the same example as above, let’s assume the questionnaire has two sets of questions. The core set consists of questions such as overall satisfaction, likelihood to recommend, satisfaction with general categories of the customer experience (e.g., sales, ordering, billing, delivery, product performance) and necessary demographic/firmographic questions. There will likely be some more specific questions related to the general categories where feedback will be relevant to everyone (e.g., product reliability, salesperson knowledge). The idiosyncratic questions apply to some outlets and not others and might include such things as “ease of access from the freeway ramp,” “way [certain lines of products that may be only available at some outlets] are displayed” and sets of questions related to product installation (in cases where installation may only be offered by some outlets).

Decision makers, then, are required to have their questionnaire contain the core questions and are allowed to select which of the idiosyncratic questions they wish to have asked.
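One way to picture this rule is as a small questionnaire-assembly routine. The Python sketch below is illustrative only: the question identifiers and the `build_questionnaire` function are hypothetical names, chosen to mirror the retail example.

```python
# Hypothetical question identifiers; the topics come from the example, the IDs do not.
CORE_QUESTIONS = [
    "overall_satisfaction",
    "likelihood_to_recommend",
    "satisfaction_sales",
    "satisfaction_billing",
    "satisfaction_delivery",
]

IDIOSYNCRATIC_QUESTIONS = [
    "freeway_ramp_access",
    "product_line_display",
    "installation_experience",
]


def build_questionnaire(selected_idiosyncratic):
    """Return the core questions plus the optional ones a decision maker chose."""
    selected = set(selected_idiosyncratic)
    unknown = selected - set(IDIOSYNCRATIC_QUESTIONS)
    if unknown:
        raise ValueError(f"Unknown optional questions: {sorted(unknown)}")
    # Core questions are always included so results can be rolled up
    # across regions and the whole organization.
    optional = [q for q in IDIOSYNCRATIC_QUESTIONS if q in selected]
    return CORE_QUESTIONS + optional


outlet_a = build_questionnaire(["installation_experience"])
outlet_b = build_questionnaire(["freeway_ramp_access", "product_line_display"])
```

Because every questionnaire starts with the same core list, the organization-wide and regional summaries stay comparable no matter which optional questions each outlet picks.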

The core-and-idiosyncratic questionnaire method has two benefits. The data needed across the entire organization (which can be summarized for an organization-wide profile as well as a regional profile) are collected through the core questions, meeting one major objective of the research. At the same time, the specific, idiosyncratic data increase buy-in from the individual outlets and enhance the credibility of the results.

Tip #3: Use an “early funnel question” in the survey based on the role played by the person being surveyed.

One objection decision makers offer for not believing survey results is exemplified by the following from customer experience research: “You asked everyone about each part of the customer experience. Hardly anyone deals with all aspects of the experience and, therefore, much of the feedback is from uninformed people.” Although this type of objection may be more common in business-to-business research, it arises in consumer studies as well: “In some families, it’s one partner who handles the purchase decision and another who handles the payments and product/service use. Hence, you don’t get informed opinions by asking everyone all questions.”

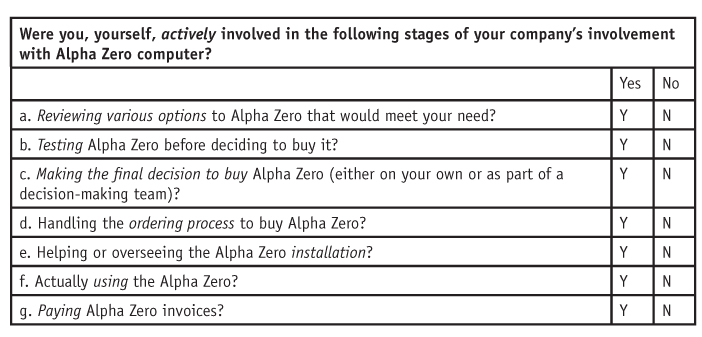

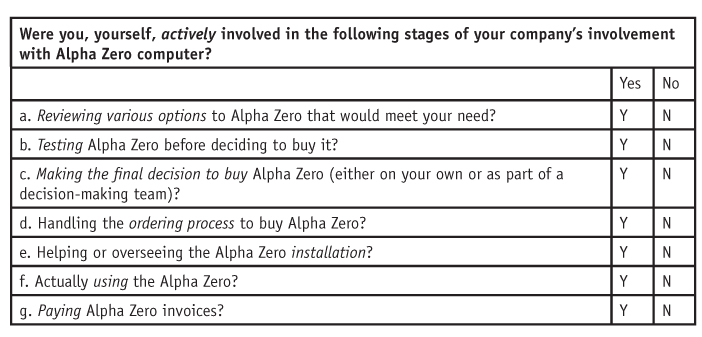

The simple way to overcome this credibility barrier is to ask an appropriate funneling question very early in the questionnaire that defines the areas in which the respondent is qualified to give informed feedback – and, then, to only ask that person for feedback in those areas. Although most surveys ask questions about job titles or place in the family, they do not directly ask about the specific role people play related to the products/services of interest. Not all purchase agents in companies, for example, play the same role. Some are actively involved in deciding which product/service is purchased while others only handle the paperwork. If one merely asks about job titles, in this case, one is forced to make assumptions about the actual roles played by various job titles – assumptions that are not necessary if the actual roles people play are asked about directly. Hence, getting information as to the exact involvement of people is critical. A sample funneling question of the type needed is shown here.

Each of the questions determines whether the respondent is asked the more detailed questions within each of those customer experience categories.
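The routing this implies can be sketched in Python as follows. The category names follow the customer experience categories mentioned earlier; the detailed question identifiers and the `questions_for` function are hypothetical, included only to show the skip logic.

```python
# Hypothetical detailed question IDs, keyed by customer experience category.
DETAIL_QUESTIONS = {
    "sales": ["salesperson_knowledge", "salesperson_responsiveness"],
    "ordering": ["ease_of_ordering"],
    "billing": ["invoice_accuracy", "invoice_clarity"],
    "delivery": ["on_time_delivery"],
}


def questions_for(funnel_answers):
    """Return detailed questions only for categories the respondent deals with.

    funnel_answers maps a category name to True or False, as answered
    in the funnel question at the start of the survey.
    """
    questions = []
    for category, items in DETAIL_QUESTIONS.items():
        # A category missing from the answers is treated as "not involved".
        if funnel_answers.get(category, False):
            questions.extend(items)
    return questions


# A purchasing agent who only handles the paperwork.
paperwork_only = questions_for({"sales": False, "ordering": True, "billing": True})
```

In this sketch the paperwork-only respondent is routed to the ordering and billing questions and never sees the sales or delivery questions.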

Sometimes, the type of question shown in the example is asked at the very end of the questionnaire (where it can be of no use in directing flow through the questionnaire) or it is asked without being linked to the flow of following questions.

Credibility is enhanced in a number of ways by using this early funnel question. First, decision makers are assured that only those who are really qualified to answer a series of detailed questions are asked those questions, i.e., the collected data is from informed, qualified people. Second, the quality of responses to the entire survey is maximized because the number of questions asked of any individual is limited to areas in which that person has direct experience. This decreases respondent fatigue and heightens the reliability of responses that are given. Finally, by not asking everyone every question, the shorter time needed to complete the survey increases response rates and decreases the deleterious effect of nonresponse bias.

Not deemed credible

It makes no difference how well a research project is designed and executed if the results are not deemed credible. Lack of credibility deters the creation and implementation of steps to capitalize on the collected information. This is true of all types of marketing research, from customer experience to branding to new product research to needs analysis to program evaluations. It also extends to other types of organizational research that marketing researchers may be asked to conduct, such as employee surveys and internal communication surveys.

Some decision makers do not have much research savvy and, as a result, their arguments that specific research results are not credible may be ill-founded. That makes little difference, however: even when the objection is unfounded, they will let the results languish on the shelf rather than act on them.

Fortunately, three easy ways exist to reduce or eliminate most of the reasons for a lack of credibility: using the “sample and census” method to determine who receives a survey invitation; using the “core and idiosyncratic” questionnaire design; and using an “early funnel question” to determine the role of the survey recipient in the experience about which feedback is being requested, so that questioning can be limited to areas where the recipient can give informed responses.

Although not all three of these methods may be needed in each research project, considering them (and implementing them where appropriate) will increase the credibility of the results. And this, in turn, will increase the use to which results are put and enhance the marketing research industry’s reputation for providing valuable information.