Cross crosstabs off the list?

Editor's note: Tim Macer is managing director and Sheila Wilson is an associate at meaning ltd., the U.K.-based research software consultancy that carried out the study on which this article is based. The 2013 Confirmit Market Research Technology Report by meaning can be downloaded free of charge from meaning.uk.com.

The annual Market Research Technology Report turns 10 this year. It was formerly known as the Market Research Software Report; its organizers felt a change of name to emphasize technology was more in line with trends reflected in the survey findings. Carried out by meaning ltd. and sponsored by Oslo, Norway-based research company Confirmit, it is the only survey on the market research industry that looks specifically at technology trends.

As interviews took place at the end of 2013 and into January of this year, the report published in March represents the 2013 survey. Encompassing the views of 240 companies in 35 countries, the survey is a combination of tracking questions that index trends over a period of years and questions of-the-moment, on topics such as: voice of the customer (VOC) and customer experience management (CEM); research using mobiles and tablets; a reprise of text analytics, which was looked at in 2011; and some 10th anniversary questions considering the previous and the next decade.

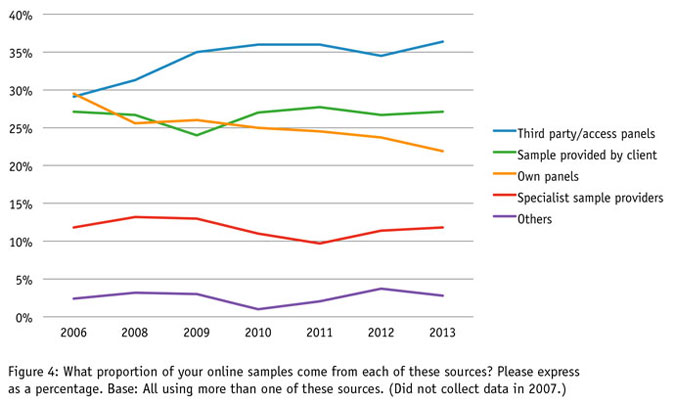

The latest survey finds that mobile research is now showing rapid growth, after a slow start. It charts an increasingly dominant role for access panels as the sample source for online research and reveals the virtual extinction of crosstabs as a client deliverable. The industry is embracing voice of the customer and customer experience management but only to a very limited extent.

As always, we are grateful to those who made this research possible: Confirmit, our sponsor; Quirk’s Research Media and the Japan Market Research Association, for helping us to pick up some valuable participants; Ascribe, who analyzed the open questions; and of course all the kind people who participated in the survey.

Mobile research

Since the 2011 survey, we have asked companies to estimate how many of their online survey participants are attempting to take their surveys on mobile devices. This is not necessarily a reliable estimate, especially as 37 percent of those interviewed did not provide an estimate. Of those who did, the estimate has risen each year and now stands at 16.4 percent of survey starts. It is well known that the proportion varies from project to project and in response to the sample source used: It tends to be higher among client-provided customer samples and lower among panels.

Unless surveys are optimized for mobile participation – and generally, most still are not – that now means one in six survey participants may find they are excluded from the sample. What makes this a serious problem is that this subpopulation has very different characteristics. Unfortunately, it means even more pressure on the survey at a time when enterprises are increasingly looking elsewhere for marketing insights.
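As a quick check on the "one in six" figure, the short sketch below converts the 16.4 percent estimate quoted above into a "one in N" ratio. The variable names are illustrative only and are not taken from the report.

```python
# Share of online survey starts attempted on mobile devices,
# as quoted in the article (percent of survey starts).
mobile_share_percent = 16.4

# Convert the percentage into a "one in N" ratio.
one_in_n = 100 / mobile_share_percent

print(f"{mobile_share_percent} percent is roughly one in {one_in_n:.1f} participants")
```

The result rounds to six, which is where the "one in six" shorthand comes from.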

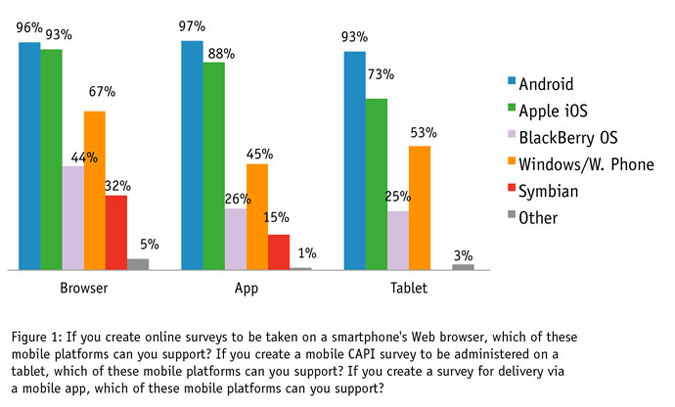

When mobile is the target of the research, the industry seems to be gearing up now: 70 percent of companies interviewed report they are able to run surveys on mobile with a browser-delivered survey and 42 percent can deliver surveys using a dedicated mobile app. A further 33 percent can mobilize tablet-based interviewing for interviewer-administered surveys.

We were interested in the specific platforms they could support; the results can be seen in Figure 1. Android and Apple iOS dominate, though Android appears to have the edge. This is more apparent when firms get to choose the hardware themselves for CAPI, where Android shows a 20 percent lead.

Voice of the customer

Customer service is one area where the survey and traditional research appear to be under threat. The 2013 survey looked specifically at how market research is responding to developments in the fields of customer experience management and voice-of-the-customer programs.

The survey found customer satisfaction, CEM and VOC were a major or increasing area of activity for 38 percent of companies and 71 percent did this type of work, even if only occasionally. Almost all of this subset of the sample (94 percent) offer customer satisfaction surveys but more novel methods are much less common.

True NPS surveys with just the two questions recommended by Fred Reichheld are used by 39 percent of those doing any c-sat or VOC work. Microsurveys such as those triggered by a QR code have achieved a foothold of just 17 percent. Customer communities languish with an 11 percent uptake.

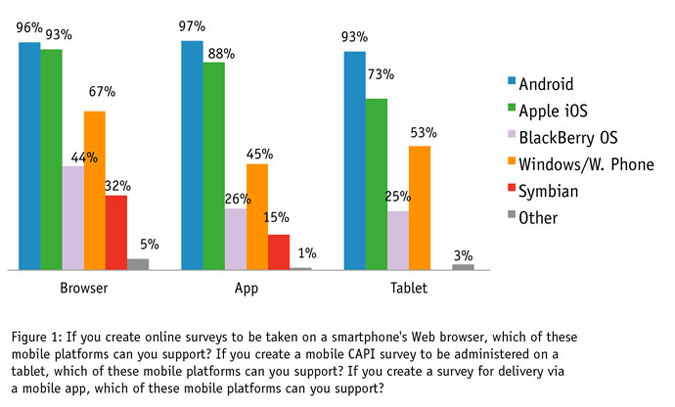

Most observers agree that another important advance found in CEM or VOC work is the integration with other sources of information available to the enterprise, from Web site traffic and social media activity to customer interactions logged at customer service centers. A question asking which delivery-type services companies provided showed that market research companies generally are not offering the level of integration that enterprises get from the specialist VOC or CEM consultants that many of them are now turning to (see Figure 2).

There are positives to be seen. Fifty-two percent of companies report satisfaction data alongside other corporate data and 40 percent will break out of the usual reporting cycle and send an immediate alert for direct follow-up. On the other hand, the very low level of engagement with social media (25 percent), call center logs (9 percent) and Web site traffic (Web logs, 8 percent) is worrying. If market research hopes to compete with the new breed of VOC or CEM consultancies, companies will need to embrace these more exotic sources of data and deliverables and provide the kind of integration that the consulting companies do.

Computer-based text analysis

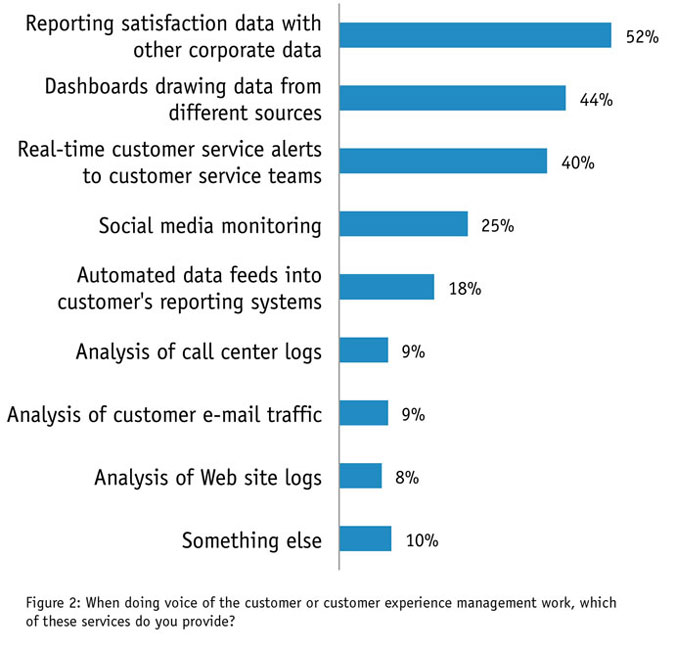

As the implications of big data continue to unfold, having better tools to process unstructured textual content would appear to be as important now as having a crosstab tool was 20 years ago. Two years ago, in the 2011 study, we asked some questions on the tools MR companies were using to help them sift through and analyze text.

At that time, we revealed an industry still heavily dependent on manual or “human” coding rather than the automated techniques used by the specialist social media analysis consultancies or in other industries, though we did find interest in using these tools in the future.

We repeated several of these questions in 2013 to see what progress had been made. It seems “the future” lies more than two years on. In 2013, the picture remains one where manual coding dominates and automated methods are rarely used (see Figure 3). However, the number of those with the faith that these tools will help them in the future has, if anything, declined.

The chief reasons cited for not using automated tools are (somewhat self-defeatingly) not having the right tools, along with concerns over quality of results from the tools and poor-quality data. It makes us wonder whether this is another area where research is finding it hard to move beyond the comfort zone of familiar methods.

To mark the 10th anniversary of the project, two questions probed what companies viewed as the game changers and the major disruptors. Well in the lead, on the positive side, was mobile, picked by 80 percent and ranked first by 50 percent. The biggest disruptor, by far, was the rise of the DIY survey tool (63 percent chose it; 30 percent ranked it first), followed by privacy concerns and mobiles displacing fixed-line telephony.

General trends

The other half of the study records a number of trends, a few of which go back to the start of the study in 2004. Since 2006 we have asked about the volume of work done by different interview modes. Over that period, online rose consistently, a few percentage points at a time, from 40 percent in 2006 to 51 percent in 2011, where it has remained.

Online’s increase has largely been at the expense of CATI and paper. CAPI has also gained ground in recent years, thanks largely to mobile CAPI. But mobile self-completion is still barely making an impact: just 2 percent in 2013. The proportion of companies offering mobile has been rising, though, from 6 percent in 2009 to 20 percent in 2013.

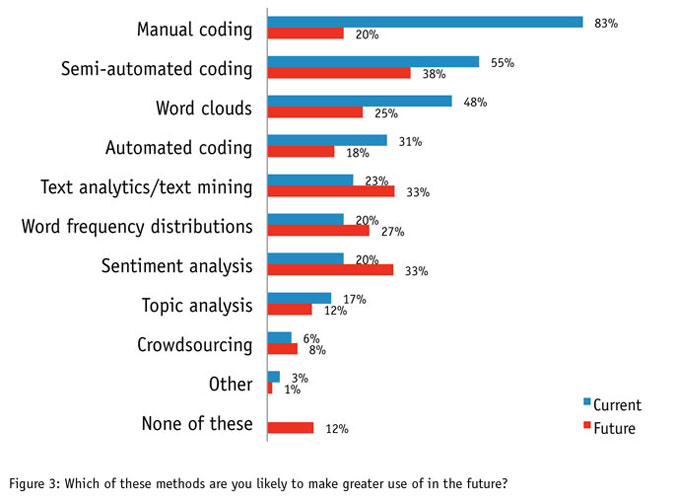

The survey has also tracked sample sources used for online research since 2006 (though not in 2007). Over the period, there has been a steady rise in reliance on access panels, which started at 29 percent of all surveys in 2006 and has now reached 36 percent (see Figure 4). In the same period, the aspirations of companies to build their own panels appear not to have borne fruit: their share has dropped from 30 percent in 2006, a whisker above the access panel, to 22 percent in 2013. In another question, companies have long predicted they will make less use of client-supplied samples in future. Like some badly kept New Year’s resolution, the volumes have remained obstinately close to 27 percent throughout the whole period.
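The shifts described above are easier to compare as percentage-point changes. The sketch below uses only the 2006 and 2013 shares quoted in this paragraph; the dictionary layout and the `point_change` helper are illustrative assumptions, not part of the report.

```python
# Share of online surveys by sample source, in percent,
# using only the 2006 and 2013 figures quoted in the article.
shares = {
    "access panels": {2006: 29, 2013: 36},
    "own panels": {2006: 30, 2013: 22},
}


def point_change(series, start=2006, end=2013):
    """Return the change in share between two years, in percentage points."""
    return series[end] - series[start]


changes = {source: point_change(series) for source, series in shares.items()}

for source, delta in changes.items():
    print(f"{source}: {delta:+d} percentage points")
```

On these figures, access panels gained seven points while companies' own panels lost eight, so a one-point lead for own panels in 2006 has turned into a 14-point deficit.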

The battle of mixed mode

A few years ago, many pundits were predicting that multimodal research would become commonplace. However, the evidence has not been there: mixed-mode has plateaued at 6 percent or 7 percent of volumes for the last eight years.

Mixed-mode can mean several different things, and another question asked each year has identified the level of mixed-mode support judged to be essential in any software being used. Three categorizations are used, the simplest being a “common authoring” environment; a more complex variant is to allow a survey to be deployed to multiple modes in parallel; and the third, most technically demanding option allows interviews to switch between modes at will.

Figure 5 shows that over the years, opinions have varied widely but that in the last few years, common authoring has emerged as the most popular choice of all three. Not that software developers can relax quite yet: over half (57 percent) of companies want their software to support either parallel modes or mode switching.